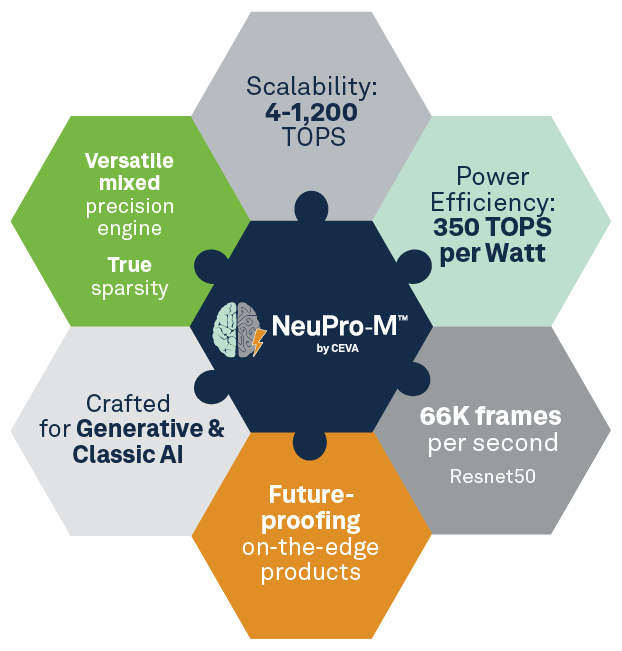

NPU IP family for generative and classic AI with highest power efficiency, scalable and future proof

Ceva-NeuPro-M redefines high-performance AI (Artificial Intelligence) processing for smart edge devices and edge compute with heterogeneous coprocessing, targeting generative and classic AI inferencing workloads.

Ceva-NeuPro-M is a scalable NPU architecture with a power efficiency of up to 350 Tera Ops Per Second per Watt (TOPS/Watt).
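To put the efficiency figure in perspective, the following minimal sketch converts a throughput target into a lower bound on NPU power. It assumes the quoted 350 TOPS/Watt applies uniformly, which is a best-case simplification. The function name `min_power_watts` is hypothetical and is not part of any Ceva tool.

```python
# Best-case ("up to") efficiency quoted in the text; real workloads may achieve less.
PEAK_TOPS_PER_WATT = 350.0


def min_power_watts(sustained_tops: float,
                    tops_per_watt: float = PEAK_TOPS_PER_WATT) -> float:
    """Lower bound on power (W) needed to sustain the given throughput (TOPS)."""
    if sustained_tops < 0 or tops_per_watt <= 0:
        raise ValueError("throughput must be >= 0 and efficiency must be > 0")
    return sustained_tops / tops_per_watt


if __name__ == "__main__":
    for tops in (4, 32, 256):
        milliwatts = min_power_watts(tops) * 1000
        print(f"{tops:>4} TOPS -> at least {milliwatts:.1f} mW")
```

Because the efficiency is an upper limit, the result is a floor on power rather than an estimate of actual consumption.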

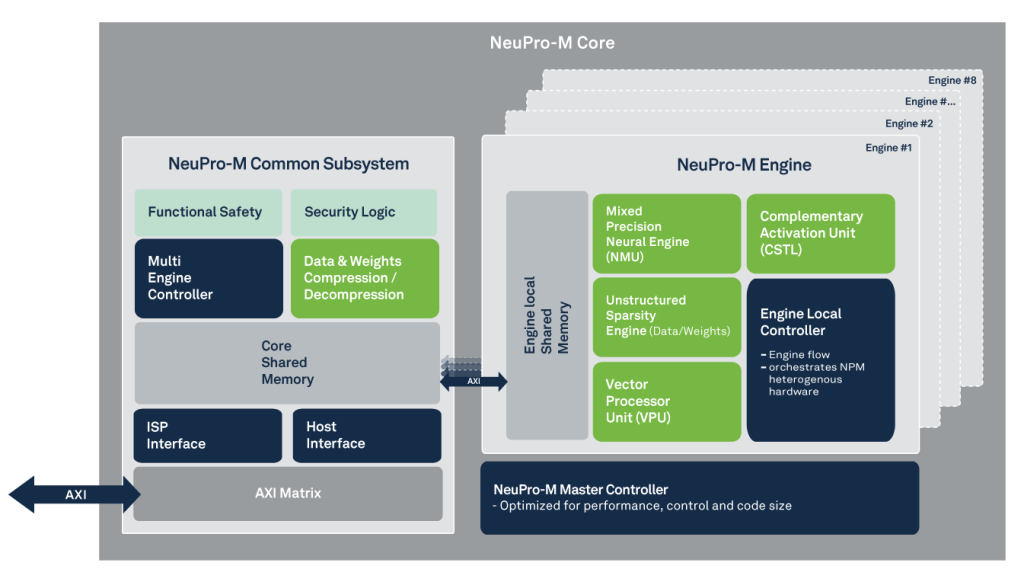

Ceva-NeuPro-M provides a major leap in performance thanks to its heterogeneous coprocessors that demonstrate compound parallel processing, firstly within each internal processing engine and secondly between the engines themselves.

Ceva-NeuPro-M ranges from 4 TOPS up to 256 TOPS per core and scales to more than 1,200 TOPS in multi-core configurations. This range covers a wide variety of AI compute needs and fits a broad set of end markets, including infrastructure, industrial, automotive, PC, consumer, and mobile.

With various orthogonal memory bandwidth reduction mechanisms and a decentralized architecture of the NPU management controllers and memory resources, Ceva-NeuPro-M can ensure full utilization of all its coprocessors while maintaining stable and concurrent data tunneling that eliminates bandwidth-limited performance, data congestion, and processing unit starvation. These mechanisms also reduce the dependency on the external memory of the SoC in which the NeuPro-M NPU IP is embedded.

The Ceva-NeuPro-M AI processor builds upon Ceva’s industry-leading position and experience in deep neural network applications. Dozens of customers are already deploying Ceva’s computer vision and AI platforms, along with the full CDNN (Ceva Deep Neural Network) toolchain, in consumer, surveillance and ADAS products.

Ceva-NeuPro-M was designed to meet the most stringent safety and quality compliance standards, such as the automotive ISO 26262 ASIL-B functional safety standard and A-SPICE quality assurance standards, and comes complete with a comprehensive AI software stack including:

- Ceva-NeuPro-M system architecture planner tool – Allows fast and accurate neural network development on NeuPro-M and helps ensure final product performance

- Neural network training optimizer tool – Provides a further performance boost and bandwidth reduction while still in the neural network domain, to fully utilize every optimized Ceva-NeuPro-M coprocessor

- Ceva-NeuPro Studio AI compiler and runtime – Composes the most efficient flow scheme within the processor to ensure maximum utilization with minimum bandwidth per use case

- Compatibility with common open-source frameworks, including TVM and ONNX

The Ceva-NeuPro-M NPU architecture supports secure access in the form of optional root of trust, authentication against IP / identity theft, secure boot and end to end data privacy.

Benefits

The Ceva-NeuPro-M AI processor family is designed to reduce the high barriers to entry into the AI space in terms of both NPU architecture and software stack, enabling an optimized, cost-effective and scalable AI platform that can be used for a multitude of AI-based inferencing workloads.

Self-contained heterogeneous NPU architecture that concurrently processes diverse AI workloads using a mixed-precision MAC array, a true sparsity engine, and weight and data compression
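The idea behind a sparsity engine can be illustrated in software: a multiply-accumulate (MAC) whose weight or activation is zero contributes nothing, so it can be skipped. The sketch below is only a conceptual model with a hypothetical function name (`sparse_dot`). It does not describe how the NeuPro-M hardware implements sparsity.

```python
from typing import Sequence, Tuple


def sparse_dot(weights: Sequence[float],
               activations: Sequence[float]) -> Tuple[float, int]:
    """Dot product that skips zero operands.

    Returns the result and the number of MACs actually performed.
    """
    if len(weights) != len(activations):
        raise ValueError("weights and activations must have the same length")
    total = 0.0
    macs = 0
    for w, a in zip(weights, activations):
        if w == 0 or a == 0:
            continue  # zero operand: the product is zero, so skip the MAC
        total += w * a
        macs += 1
    return total, macs


if __name__ == "__main__":
    weights = [0.5, 0.0, 0.0, -1.0, 0.0, 0.0, 2.0, 0.0]
    activations = [1.0, 3.0, 0.0, 2.0, 5.0, 0.0, 0.0, 4.0]
    result, macs = sparse_dot(weights, activations)
    print(f"result={result}, MACs performed={macs} of {len(weights)}")
```

In this toy input only two of the eight positions have both operands non-zero, so six MACs are avoided while the result stays identical to the dense computation.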

Scalable performance of 4 to 1,200 TOPS in a modular multi-engine/multi-core architecture at both SoC and chiplet levels for diverse application needs: up to 32 TOPS for a single-engine NPU core and up to 256 TOPS for an 8-engine NPU core
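The scaling figures above follow from simple arithmetic, sketched here under the assumption that peak throughput scales linearly with engine and core count. Only the 32 TOPS per engine and 8 engines per core values come from the text. The function names are hypothetical, and real multi-core throughput depends on memory bandwidth and workload.

```python
import math

TOPS_PER_ENGINE = 32       # peak per engine, from the text
MAX_ENGINES_PER_CORE = 8   # largest core configuration, from the text


def core_peak_tops(engines: int) -> int:
    """Peak TOPS of one core, assuming linear scaling across engines."""
    if not 1 <= engines <= MAX_ENGINES_PER_CORE:
        raise ValueError("a core has between 1 and 8 engines")
    return engines * TOPS_PER_ENGINE


def cores_needed(target_tops: float,
                 engines_per_core: int = MAX_ENGINES_PER_CORE) -> int:
    """Smallest number of cores whose combined peak meets the target."""
    return math.ceil(target_tops / core_peak_tops(engines_per_core))


if __name__ == "__main__":
    for engines in (1, 2, 4, 8):
        print(f"{engines}-engine core: up to {core_peak_tops(engines)} TOPS")
    print(f"Cores needed for 1200 TOPS: {cores_needed(1200)}")
```

Under these assumptions, five 8-engine cores (5 x 256 = 1,280 TOPS) are the smallest configuration that exceeds 1,200 TOPS.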

Future-proof through a programmable VPU (Vector Processing Unit) that can support any future network layer

Main Features

- Highly power-efficient with up to 350 TOPS/Watt at 3nm

- Supports a wide range of activation and weight data types, from 32-bit Floating Point down to 2-bit Binary Neural Networks (BNN)

- Unique mixed-precision neural engine MAC array microarchitecture to support data type diversity with minimal power consumption

- Unstructured and structured sparsity engine that avoids operations on zero-value weights or activations in every layer throughout the inference process. With a performance gain of up to 4x, sparsity also reduces memory bandwidth and power consumption.

- Vector Processing Unit (VPU), a fully programmable processor that runs concurrently with the other engines and can handle any future neural network architecture

- Lossless real-time weight and data compression/decompression, for reduced external memory bandwidth

- Scalability through a different memory configuration per use case and an inherent single-core architecture with 1 to 8 engines for diverse processing performance

- Secure boot and protection of neural network weights/data against identity theft

- Memory hierarchy architecture that minimizes the power consumed by data transfers to and from external SDRAM and optimizes overall bandwidth consumption

- Decentralized management controller architecture, with a local data controller on each engine, to achieve optimized data tunneling for low bandwidth and maximal utilization, as well as an efficient parallel processing scheme

- Supports the latest NN architectures, such as transformers, fully-connected (FC), FC batch, RNN, 3D convolution and more

- The Ceva-NeuPro-M NPU IP family includes the following processor options:

  - Ceva-NPM11 – A single-engine core, with processing power of up to 32 TOPS

  - Ceva-NPM12 – A 2-engine core, with processing power of up to 64 TOPS

  - Ceva-NPM14 – A 4-engine core, with processing power of up to 128 TOPS

  - Ceva-NPM18 – An 8-engine core, with processing power of up to 256 TOPS

- Matrix decomposition for up to 10x enhanced performance during network inference
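The text does not specify which decomposition NeuPro-M applies. As one common illustration, the sketch below assumes a low-rank factorization, where an m x n weight matrix W is replaced by the product of an m x r matrix U and an r x n matrix V, so a matrix-vector product costs r(m + n) MACs instead of mn. All names here are hypothetical, and the technique shown is an assumption, not Ceva's documented method.

```python
from typing import List


def dense_macs(m: int, n: int) -> int:
    """MACs for y = W x with W of shape m x n."""
    return m * n


def low_rank_macs(m: int, n: int, r: int) -> int:
    """MACs for y = U (V x) with U of shape m x r and V of shape r x n."""
    return r * (m + n)


def speedup(m: int, n: int, r: int) -> float:
    """Ratio of dense to factored MAC counts."""
    return dense_macs(m, n) / low_rank_macs(m, n, r)


def matvec(mat: List[List[float]], vec: List[float]) -> List[float]:
    """Plain matrix-vector product."""
    return [sum(a * b for a, b in zip(row, vec)) for row in mat]


if __name__ == "__main__":
    # Rank-1 check: W = u v^T, so W x equals u scaled by (v . x).
    u = [1.0, 2.0, 3.0]
    v = [4.0, 5.0]
    W = [[ui * vj for vj in v] for ui in u]
    x = [1.0, -1.0]
    direct = matvec(W, x)
    v_dot_x = sum(vj * xj for vj, xj in zip(v, x))
    factored = [ui * v_dot_x for ui in u]
    print(direct, factored)
    print(f"1024x1024 layer at rank 51: {speedup(1024, 1024, 51):.2f}x fewer MACs")
```

The MAC reduction only translates into an equivalent speedup when the layer is well approximated at the chosen rank. Accuracy impact is outside the scope of this sketch.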

Block Diagram

Linley Microprocessor Report: Ceva-NeuPro-M Enhances BF16, FP8 Support

Ceva has updated its licensable Ceva-NeuPro-M design to better handle Transformers—the neural networks underpinning ChatGPT and Dall-E AI software and now finding application at the edge in computer vision. Architectural changes to NeuPro-M improve its power efficiency and can increase its performance sevenfold on models that can take advantage of the new features. Critical among these is the addition of BF16 and FP8 support to more NeuPro-M function units and improved handling of sparse data.