CEVA's 2nd Generation Neural Network Software Framework Extends Support for Artificial Intelligence Including Google's TensorFlow

LAS VEGAS, June 27, 2016 /PRNewswire/ -- CVPR 2016 -- CEVA, Inc. (NASDAQ: CEVA), the leading licensor of signal processing IP for smarter, connected devices, today introduced CDNN2 (CEVA Deep Neural Network), its second generation neural network software framework for machine learning.

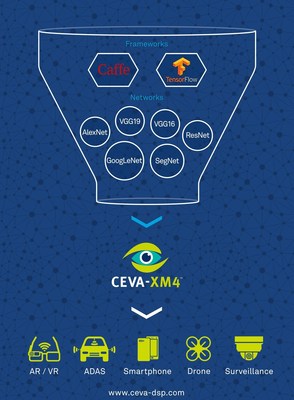

CDNN2 enables localized, deep learning-based video analytics on camera devices in real time. This significantly reduces data bandwidth and storage compared to running such analytics in the cloud, while lowering latency and increasing privacy. Coupled with the CEVA-XM4 intelligent vision processor, CDNN2 offers significant time-to-market and power advantages for implementing machine learning in embedded systems for smartphones, advanced driver assistance systems (ADAS), surveillance equipment, drones, robots and other camera-enabled smart devices.

CDNN2 builds on the foundations of CEVA's first generation neural network software framework (CDNN), which is already in design with multiple customers and partners. It adds support for TensorFlow, Google's software library for machine learning, and offers improved capabilities and performance for the latest and most sophisticated network topologies and layers. CDNN2 also supports fully convolutional networks, allowing any given network to work with any input resolution.

Pete Warden, lead of the TensorFlow Mobile/Embedded team at Google, commented: "It's great to see CEVA adopting TensorFlow. Power efficiency is key to successfully harnessing the potential of deep learning in embedded devices. CEVA's low-power vision processors and CDNN2 framework could help a wide variety of developers get TensorFlow working on their devices."

Using a set of enhanced APIs, CDNN2 improves overall system performance, including direct offload of various neural network-related tasks from the CPU to the CEVA-XM4. These enhancements, combined with a "push-button" capability that automatically converts pre-trained networks to run seamlessly on the CEVA-XM4, underpin the time-to-market and power advantages that CDNN2 offers for developing embedded vision systems. As a result, CDNN2 generates an even faster network model for the CEVA-XM4 imaging and vision DSP, one that consumes significantly less power and memory bandwidth than CPU- and GPU-based systems.

Jeff Bier, founder of the Embedded Vision Alliance, commented: "Today, designers of many types of systems – from cars to drones to home appliances – are incorporating embedded vision into their products to enhance safety, autonomy and functionality. I applaud CEVA's initiative in enabling low-cost, low-power implementations of visual intelligence using deep neural networks."

Eran Briman, vice president of marketing at CEVA, commented: "The enhancements we have introduced in our second generation Deep Neural Network framework are the result of extensive in-the-field experience with CEVA-XM4 customers and partners. They are developing and deploying deep learning systems utilizing CDNN for a broad range of end markets, including drones, ADAS and surveillance. In particular, the addition of support for networks generated by TensorFlow is a critical enhancement that ensures our customers can leverage Google's powerful deep learning system for their next-generation AI devices."

CDNN2 is intended for object recognition, advanced driver assistance systems (ADAS), artificial intelligence (AI), video analytics, augmented reality (AR), virtual reality (VR) and similar computer vision applications. The CDNN2 software library is supplied as source code, extending the CEVA-XM4's existing Application Developer Kit (ADK) and computer vision library, CEVA-CV. It is flexible and modular, capable of supporting either complete CNN implementations or specific layers for a wide breadth of networks. These networks include AlexNet, GoogLeNet, ResidualNet (ResNet), SegNet, VGG (VGG-19, VGG-16, VGG_S) and Network-in-Network (NIN), among others. CDNN2 supports the most advanced neural network layers, including convolution, deconvolution, pooling, fully connected, softmax, concatenation and upsample, as well as various inception models. All network topologies are supported, including Multiple-Input-Multiple-Output, multiple layers per level and fully convolutional networks, in addition to linear networks such as AlexNet.

A key component within the CDNN2 framework is the offline CEVA Network Generator, which converts a pre-trained neural network into an equivalent embedded-friendly network in fixed-point math at the push of a button. CDNN2 deliverables include a hardware-based development kit that allows developers not only to run their network in simulation, but also to run it easily in real time on the CEVA development board.
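This release does not describe how the CEVA Network Generator performs its floating-point to fixed-point conversion. Purely as a generic illustration of what such a conversion involves, the sketch below applies one common technique, per-tensor Q-format quantization: it picks the number of fractional bits so the largest weight still fits in a signed integer, then rounds and saturates each value. The 16-bit width, the rounding and saturation policy, and the function names (`choose_frac_bits`, `quantize`, `dequantize`) are assumptions for illustration, not CEVA's method or API.

```python
import math
from typing import List


def choose_frac_bits(values: List[float], total_bits: int = 16) -> int:
    """Return the largest number of fractional bits f such that
    max|v| * 2**f fits in a signed integer of width total_bits.
    (Hypothetical helper: a simple per-tensor Q-format choice.)"""
    int_max = 2 ** (total_bits - 1) - 1
    max_abs = max(abs(v) for v in values)
    if max_abs == 0.0:
        return total_bits - 1
    f = math.floor(math.log2(int_max / max_abs))
    # Keep f in a sensible range; out-of-range values are saturated later.
    return max(0, min(f, total_bits - 1))


def quantize(values: List[float], frac_bits: int, total_bits: int = 16) -> List[int]:
    """Convert floats to fixed-point integers: scale by 2**frac_bits,
    round to nearest, and saturate to the signed total_bits range."""
    lo = -(2 ** (total_bits - 1))
    hi = 2 ** (total_bits - 1) - 1
    scale = 2 ** frac_bits
    return [min(hi, max(lo, round(v * scale))) for v in values]


def dequantize(q: List[int], frac_bits: int) -> List[float]:
    """Map fixed-point integers back to floats to inspect the error."""
    scale = 2 ** frac_bits
    return [x / scale for x in q]


# Example: quantize a small, made-up set of weights to 16-bit fixed point.
weights = [0.5, -1.25, 0.015625, 0.9]
f = choose_frac_bits(weights)
q = quantize(weights, f)
approx = dequantize(q, f)
print("fractional bits:", f)
print("fixed-point values:", q)
print("max abs error:", max(abs(a - w) for a, w in zip(approx, weights)))
```

With round-to-nearest, each in-range value is recovered to within half a quantization step, 2^-(f+1). Real conversion tools typically go further, for example choosing formats per layer and calibrating activation ranges on sample data.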

For more information on CDNN2, visit http://launch.ceva-dsp.com/CDNN2.

About CEVA, Inc.

CEVA is the leading licensor of signal processing IP for a smarter, connected world. We partner with semiconductor companies and OEMs worldwide to create power-efficient, intelligent and connected devices for a range of end markets, including mobile, consumer, automotive, industrial and IoT. Our ultra-low-power IPs for vision, audio, communications and connectivity include comprehensive DSP-based platforms for LTE/LTE-A/5G baseband processing in handsets, infrastructure and machine-to-machine devices, computer vision and computational photography for any camera-enabled device, and audio/voice/speech and ultra-low-power always-on/sensing applications for multiple IoT markets. For connectivity, we offer the industry's most widely adopted IPs for Bluetooth (Smart and Smart Ready), Wi-Fi (802.11 a/b/g/n/ac up to 4x4) and serial storage (SATA and SAS). Visit us at ceva-dsp.com and follow us on Twitter, YouTube and LinkedIn.

Video - http://www.youtube.com/watch?v=SXINFryLM3Q

Photo - http://photos.prnewswire.com/prnh/20160626/383448

Logo - http://photos.prnewswire.com/prnh/20120808/SF53702LOGO

SOURCE CEVA, Inc.