Wide-angle cameras are in demand in smartphones, cars, VR and surveillance, whether for convenience, cost or safety. Turning wide-angle, high-resolution input into pleasing and usable high-resolution output in real time depends on a holistic solution that combines special optics, dedicated hardware and customized software.

Recently released flagship phones have three cameras; on the iPhone 11 Pro these are a wide-angle lens, a telephoto lens and an ultra-wide lens. This is how makers are edging phone photography closer to professional photography – even aiming to match human vision. Arguably this area is now the primary differentiator in smartphones, to the point that makers compete for position in widely respected rankings. That is not surprising – by the end of next year 50% of new phones are expected to have three or more cameras, for a market in Asia alone exceeding 800M units.

Among these, ultra-wide cameras are especially important for consumers’ favorite shots. You’ll have seen that phone specs all boast a wide field of view (FOV), around 120 degrees. Note that the FOV of the human eye is around 135 degrees vertically and 200 degrees horizontally, so the phone makers are getting close to mimicking our eyes. Then again, we have our brains to figure out the optics. Phone makers need specialized optics, software and hardware to turn their ultra-wide-angle shots into pleasing pictures.

Phones can’t directly match the adjustable optics of a professional camera or the human eye, so they must compensate with different cameras suited to different purposes. Good human interface controls (such as on the iPhone 11) provide a non-intrusive way to switch between these options for wide-angle scene shots, selfies or groupies.

Why is ultra-wide imaging such a big deal? Most obviously you can take stunning landscape shots, the kind of images you can see directly (your eyes are much better than most cameras at ultra-wide angle). A little less obviously they’re also really good for close-in groupie shots – no need to scrunch people together so that everyone will fit in the frame.

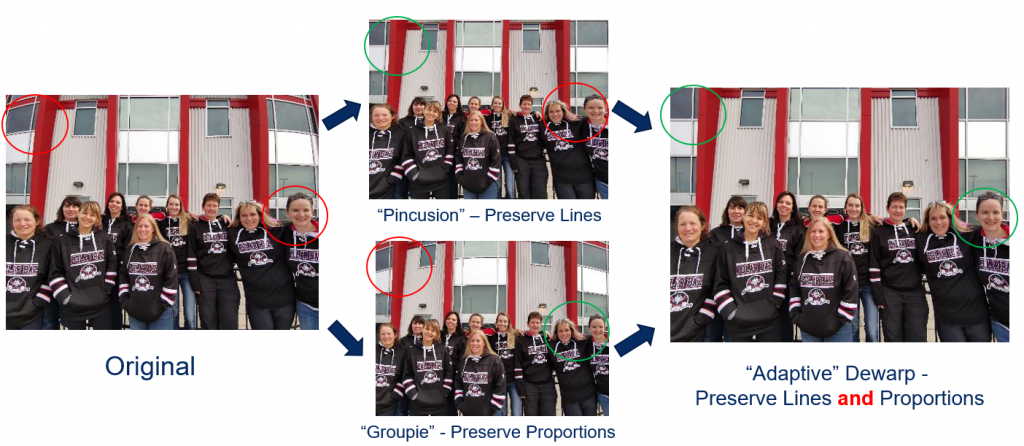

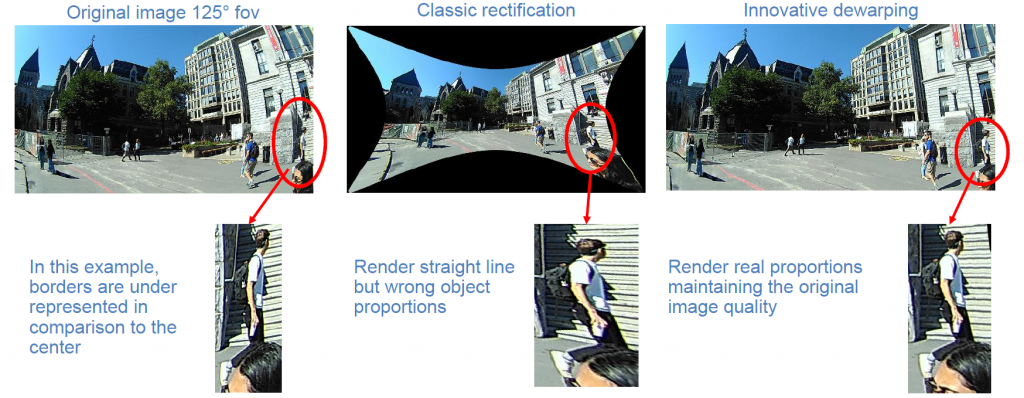

But when you have a small sensor and a wide-angle lens for a wide field of view, the raw image is heavily distorted. Straight lines are bent, and people’s bodies and faces are distorted, not exactly what you want to see in a selfie or groupie. Fixing this distortion needs to be fast, on the order of milliseconds, so the correction is effectively real-time as you’re looking at the picture.
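To make the correction step concrete, here is a minimal sketch of the geometry behind a simple dewarp. It assumes an ideal equidistant fisheye lens (image radius proportional to the ray angle) and a rectilinear output with the same focal length. Both assumptions, along with the function name `fisheye_source_coords` and its parameters, are illustrative only; real lenses need calibrated distortion models, and this is not the method of any particular product.

```python
import math

def fisheye_source_coords(u, v, width, height, focal_px):
    """For an output (corrected) pixel (u, v), return the position in the
    distorted input image that should be sampled.

    Assumed models (hypothetical, for illustration):
      rectilinear output:  r_out = f * tan(theta)
      equidistant fisheye: r_in  = f * theta
    """
    cx, cy = (width - 1) / 2.0, (height - 1) / 2.0  # optical center
    dx, dy = u - cx, v - cy
    r_out = math.hypot(dx, dy)
    if r_out == 0.0:
        return cx, cy  # the center pixel is not displaced
    theta = math.atan(r_out / focal_px)  # angle of the ray off the optical axis
    r_in = focal_px * theta              # where that ray lands in the fisheye image
    scale = r_in / r_out
    return cx + dx * scale, cy + dy * scale
```

A full dewarp would evaluate this mapping for every output pixel and sample the input image there, typically with bilinear interpolation. Because the mapping depends only on the lens, it can be precomputed once as a lookup table, which is one reason this kind of correction is a natural fit for dedicated hardware or a vision DSP.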

It also needs to be adaptive. In standard dewarping of a wide field of view image, a large amount of the input field of view can be lost through cropping, defeating the original purpose. A more intelligent adaptive approach should retain the full field of view while also correcting straight lines and the proportions of bodies and faces. This isn’t done through one “super” algorithm. It can be accomplished through intelligent application of different algorithms to different parts of the scene. This is something on which an OEM could differentiate, given an ability to selectively apply such corrections.
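A rough calculation shows how much can be lost. The sketch below again assumes an ideal equidistant fisheye input and a rectilinear output at the same focal length, which are simplifying assumptions rather than a description of any real lens; the function names are hypothetical.

```python
import math

def retained_fov_deg(input_fov_deg):
    """FOV kept if the dewarped (rectilinear) image is cropped back to the
    original frame width, under the assumed equidistant fisheye model."""
    theta_max = math.radians(input_fov_deg / 2.0)
    # Fisheye half-width is f * theta_max; a rectilinear ray reaches that
    # radius when tan(theta) = theta_max.
    return 2.0 * math.degrees(math.atan(theta_max))

def width_growth_to_keep_fov(input_fov_deg):
    """How much wider the rectilinear image must be to keep the full FOV."""
    theta_max = math.radians(input_fov_deg / 2.0)
    return math.tan(theta_max) / theta_max

print(round(retained_fov_deg(120.0), 1))          # about 92.6 degrees
print(round(width_growth_to_keep_fov(120.0), 2))  # about 1.65x wider
```

Under these assumptions, a 120 degree capture cropped to its original frame keeps only about 93 degrees, while keeping everything would stretch the frame to roughly 1.65 times its width, with the stretching concentrated at the edges where faces in a group shot tend to be. That trade-off is what motivates treating different parts of the scene differently.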

While phone cameras dominate unit volume, ultra-wide imaging has other and more practical applications. Many applications, such as automotive and robot vacuum imaging systems, want as wide a view as possible while also keeping cost down. Especially in automotive uses, it is essential to collect as wide an image as possible, even a full 360° view using just two cameras – you don’t want to miss that pedestrian stepping off the sidewalk. However, speed of correction is critical. For these applications, a few ultra-wide cameras providing this kind of corrected imaging to downstream object detection can be the ideal solution.

VR/AR is another obvious application where you want a human eye field of view rather than a restricted camera field of view. But latency through the software must be negligible, since long latencies are known to contribute to motion sickness.

CEVA together with Immervision provides a hardware-independent, high-resolution, high-frame-rate software solution with real-time response for adaptive dewarp, and a power-optimized version for CEVA vision DSPs. You can learn more here.

Published on edge ai + vision ALLIANCE.
