It is widely accepted that sensors are most effective when they work together. This is particularly true for SLAM (Simultaneous Localization and Mapping). SLAM is valuable in AR/VR, where it adjusts the scene to the user’s pose, and in drones and robots for collision avoidance, among many other applications. The SLAM market is expected to grow to $465M by 2023 at a healthy 36% CAGR, making it an exciting opportunity for product builders.

One application may dominate through the sheer size of its base platform market: SLAM on our cell phones for indoor navigation. GPS won’t work indoors, and beacon-based navigation will only work in areas with beacon infrastructure. SLAM can work anywhere that indoor maps are available, a low-cost expectation for most building managers. Making SLAM work here is a good example of a sensor fusion application, in this case fusing the scene with the user’s pose and motion as they walk through the area.

(Source: CEVA)


An out-of-the-box implementation

I’ll start by describing how you would put together and test such a solution based on our CEVA SensPro sensor hub DSP hardware, together with our SLAM and MotionEngine software modules to condition and manage motion input. We need a camera, inertial measurement sensors, a host CPU, and a DSP. We’ll use the CPU to host the MotionEngine and the SLAM framework, and the DSP to offload the heavy algorithmic SLAM tasks.

To simplify the explanation, I’ll start with OrbSLAM, a widely used open-source implementation. It performs three major functions. Tracking does (visual) frame-to-frame registration and localizes each new frame on the current map. Mapping adds points to the map and optimizes locally by creating and solving a complex set of linear equations. Loop closure optimizes globally, correcting the map whenever you return to a place you have already visited. This also requires solving a very large set of linear equations.
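To make the loop-closure idea concrete, here is a minimal sketch, not OrbSLAM's actual optimizer. It assumes a one-dimensional world, equally weighted constraints, and a hypothetical `optimize_pose_graph` helper. Odometry between consecutive poses drifts, a single loop-closure constraint says the last pose coincides with the first, and the poses are recovered by solving the resulting linear least-squares system.

```python
def solve_linear_system(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def optimize_pose_graph(odometry, loop_closures):
    """Least-squares estimate of 1D poses, with pose 0 fixed at 0.0.

    odometry: measured displacement between consecutive poses.
    loop_closures: (i, j, z) tuples, each stating that x_j - x_i was measured as z.
    """
    n = len(odometry)  # unknowns are x_1 .. x_n
    constraints = [(i, i + 1, u) for i, u in enumerate(odometry)]
    constraints += list(loop_closures)
    h = [[0.0] * n for _ in range(n)]  # normal-equation matrix A^T A
    g = [0.0] * n                      # right-hand side A^T z
    for i, j, z in constraints:
        # Jacobian row of the residual (x_j - x_i - z): +1 at pose j, -1 at pose i.
        # Pose 0 is fixed, so it contributes no column.
        row = [(j - 1, 1.0)] if j > 0 else []
        if i > 0:
            row.append((i - 1, -1.0))
        for p, vp in row:
            g[p] += vp * z
            for q, vq in row:
                h[p][q] += vp * vq
    return [0.0] + solve_linear_system(h, g)


# Walk out two steps and back two steps; the last odometry reading has drifted.
odometry = [1.0, 1.0, -1.0, -0.8]
dead_reckoning = [0.0]
for u in odometry:
    dead_reckoning.append(dead_reckoning[-1] + u)

# Loop closure: pose 4 is recognized as the same place as pose 0.
optimized = optimize_pose_graph(odometry, [(0, 4, 0.0)])
print("dead reckoning:", [round(x, 3) for x in dead_reckoning])
print("optimized:     ", [round(x, 3) for x in optimized])
```

With these illustrative numbers, dead reckoning ends 0.2 units away from the start, while the optimized graph ends about 0.04 away, with the error spread evenly over the five constraints. A real system solves the same kind of problem over 6-DoF poses and thousands of map points, which is why the equation solving becomes so expensive.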

Some of these functions can run very effectively inside the host app on a CPU core, together with the control and management functions unique to your application. Others must run on the DSP to be practical or to give you a competitive advantage. For example, tracking might manage one frame per second (fps) on a CPU, where feature extraction alone can burn 40% of the algorithm runtime. In contrast, a DSP implementation can manage 30 fps, a frame rate which will be important for fine-grained correlation between video and IMU data.
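A quick back-of-the-envelope calculation shows what these numbers imply. The sketch below uses only the figures quoted above (1 fps, 30 fps, a 40% feature-extraction share) plus Amdahl's law; the function names are illustrative, and the stage speedups in the loop are arbitrary assumptions rather than measured values.

```python
def frame_budget_ms(fps):
    """Time available to process one frame at a given frame rate."""
    return 1000.0 / fps


def amdahl_speedup(accelerated_fraction, stage_speedup):
    """Overall speedup when only a fraction of the runtime is accelerated."""
    remaining = 1.0 - accelerated_fraction
    return 1.0 / (remaining + accelerated_fraction / stage_speedup)


cpu_fps = 1.0            # tracking rate on the CPU, from the text
target_fps = 30.0        # tracking rate on the DSP, from the text
feature_fraction = 0.40  # share of runtime spent in feature extraction

print(f"frame budget at {target_fps:.0f} fps: {frame_budget_ms(target_fps):.1f} ms")
print(f"required overall speedup: {target_fps / cpu_fps:.0f}x")

# Assumed stage speedups, for illustration only.
for stage_speedup in (2.0, 10.0, 1000.0):
    overall = amdahl_speedup(feature_fraction, stage_speedup)
    print(f"feature extraction {stage_speedup:g}x faster -> pipeline {overall:.2f}x faster")
```

Accelerating feature extraction alone can never give more than about 1.67x overall, since the other 60% of the runtime is untouched. Reaching 30 fps therefore means moving the rest of the tracking workload onto the DSP as well, not just the feature extractor.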

There are easily understood reasons for this advantage. A DSP implementation provides very high parallelism along with fixed- and floating-point support, both critical in tracking and in linear equation solving. This is complemented by a special instruction set to accelerate feature extraction. An easy link between the host and the DSP lets you use the SensPro as an accelerator, offloading the intensive computations from the host.

Fusing IMU with Vision

We provide two critical components: visual SLAM through our CEVA-SLAM SDK product, and the CEVA MotionEngine software, which resolves IMU input very accurately for three of the six degrees of freedom of motion. Fusing IMU and video information depends on an iterative algorithm which is typically customized to suit application requirements. This fusion step correlates visual data with motion data to produce refined localization and mapping estimates. CEVA provides proven visual SLAM and the IMU MotionEngine as a solid foundation for your fusion algorithm development. The algorithmically intensive functions making up most of this computation will run fastest on a DSP, such as our SensPro2 platform.
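As an illustration of what such a fusion loop can look like, here is a minimal sketch of a scalar Kalman filter that fuses a gyro rate with a visual heading estimate. This is a generic textbook technique, not the CEVA-SLAM or MotionEngine algorithm. The `HeadingFilter` class, the noise values, the sensor rates, and the gyro bias are all assumptions chosen for the example, and heading wrap-around is ignored.

```python
class HeadingFilter:
    """Scalar Kalman filter: gyro drives the prediction, vision corrects it."""

    def __init__(self, process_var, visual_var):
        self.theta = 0.0        # heading estimate (rad)
        self.p = 1.0            # estimate variance, large means "unsure"
        self.q = process_var    # variance added per gyro integration step
        self.r = visual_var     # variance of a visual heading measurement

    def predict(self, gyro_rate, dt):
        # Integrate the angular rate; uncertainty grows with every step.
        self.theta += gyro_rate * dt
        self.p += self.q

    def update(self, visual_heading):
        # Blend in the visual measurement according to relative confidence.
        gain = self.p / (self.p + self.r)
        self.theta += gain * (visual_heading - self.theta)
        self.p *= 1.0 - gain


def simulate(duration_s=10.0, imu_hz=100, camera_hz=10,
             true_rate=0.1, gyro_bias=0.02):
    """Return (gyro-only error, fused error) in radians at the end of the run."""
    dt = 1.0 / imu_hz
    steps_per_frame = imu_hz // camera_hz
    kf = HeadingFilter(process_var=1e-5, visual_var=1e-3)
    true_theta = 0.0
    gyro_only = 0.0
    for step in range(1, int(duration_s * imu_hz) + 1):
        true_theta += true_rate * dt
        measured_rate = true_rate + gyro_bias   # biased gyro reading
        gyro_only += measured_rate * dt         # pure integration drifts
        kf.predict(measured_rate, dt)
        if step % steps_per_frame == 0:
            # A camera frame arrives; noise-free here to keep the sketch deterministic.
            kf.update(true_theta)
    return abs(gyro_only - true_theta), abs(kf.theta - true_theta)


gyro_error, fused_error = simulate()
print(f"gyro-only heading error: {gyro_error:.4f} rad")
print(f"fused heading error:     {fused_error:.4f} rad")
```

The gyro-only estimate drifts steadily because of the bias, while the fused estimate is pulled back at every camera frame. The higher the visual frame rate, the less drift accumulates between corrections, which is one reason the tracking frame rate matters for fusion quality.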

Testing the prototype

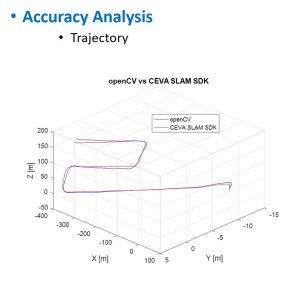

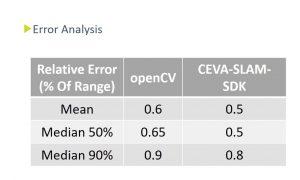

Once you’ve built your prototype platform, how will you test it? There are several SLAM datasets available. KITTI is one example, EuRoC is another. In the example shown here, I compare accuracy between the OpenCV implementation and our CEVA-SLAM SDK implementation. You will want to do similar analyses on your product.
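One common way to quantify accuracy against a dataset's ground truth is the root-mean-square absolute trajectory error (ATE). The sketch below is a simplified version that assumes the estimated and ground-truth positions are already time-synchronized and expressed in the same coordinate frame; standard evaluations typically align the two trajectories first, which is omitted here. The function name and the sample trajectories are made up for illustration and are not results from either implementation.

```python
import math


def ate_rmse(estimated, ground_truth):
    """RMS position error between two equally long lists of (x, y, z) points."""
    if not estimated or len(estimated) != len(ground_truth):
        raise ValueError("trajectories must be non-empty and of equal length")
    squared_errors = [
        sum((e - g) ** 2 for e, g in zip(est_point, gt_point))
        for est_point, gt_point in zip(estimated, ground_truth)
    ]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Made-up sample trajectories in metres, for illustration only.
ground_truth = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
estimated = [(0.0, 0.0, 0.0), (1.0, 0.1, 0.0), (2.0, 0.0, 0.2)]

print(f"ATE RMSE: {ate_rmse(estimated, ground_truth):.4f} m")
```

Running the same metric over the same sequences for each implementation gives a like-for-like comparison, provided both use identical alignment and timestamp association.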

Mix your own

As I mentioned earlier, there are many ways to build a SLAM platform. Maybe you don’t want to start from OrbSLAM or you want to mix in your own preferred or differentiated algorithms. These possibilities are readily supported in the SensPro sensor hub DSP.

Contact us to learn more about applications in this space!

Published in Electronic Design.
