As we shipped our 100 millionth product, we paused to reflect on the milestone and look back at the past 15+ years of innovation in the motion sensor industry. We found that many early predictions about how sensors would become core to the modern user experience have since come to pass.

Thursday, February 21, 2019

We’re proud to have reached a major milestone recently: our customers have delivered more than 100 million products with Hillcrest software worldwide. This achievement gave us reason to pause and reflect on the exciting 15-year journey that both our company and the motion sensing industry have taken. We’ve grown up in this industry, watching trends develop and fads come and go.

Nicholas Negroponte, the famous MIT professor and founder of the MIT Media Lab, made predictions all the way back in 1984 about the future of tech. He was already criticizing the computer mouse, looking for a touch-based interface that was more refined. He also talked about the rise of touch-sensitive displays that allow you to interact with a screen using a very low-tech stylus (your fingertip). Fast forward 30+ years and touch-sensitive devices are now an integral part of everyday life.

Another futurist, Paul Saffo, predicted in 1997 the important role that sensors would have in the evolution of “infotech.” In his essay, “Sensors: The Next Wave of Infotech Innovation,” Saffo explained that the 1980s were about Processing–capabilities and speed–and the 1990s were about Access–to data, to the burgeoning internet, and to each other. The 2000s, he predicted, would be about Interaction, and sensors would play a pivotal role in our increasingly digital lives.

In the early 2000s, as Saffo’s predicted Age of Interaction was in its infancy, the founder of Hillcrest Labs watched his niece run back and forth from an IM chat on the desktop computer in one room to the living room where the TV was. He thought, there just has to be a better way to interact with these devices. Why can’t both be controlled by the same user interface?

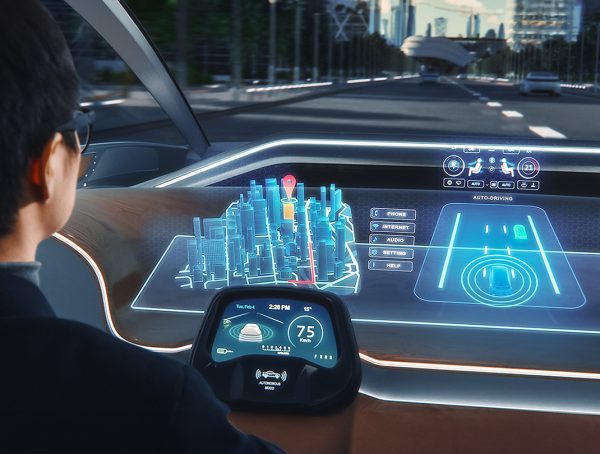

This led to Hillcrest Labs’ creation of a new TV user experience and the first MEMS-based motion sensing remote control, which spawned what is now Hillcrest Labs’ MotionEngine™ software–used by LG to enable the point-and-click simplicity of its Magic Remote for certain LG Smart TVs.

Mobile Tech: The Burst into the Marketplace

Technology doesn’t just change at the dawning of a new decade. “What defines each decade,” Saffo wrote, “is not the underlying technology invention, but rather a dramatic favorable shift in price and performance that triggers a sudden burst in diffusion from lab to marketplace.”

We’ve certainly observed this in the world of mobile consumer tech. Cell phones were only starting to become commonplace in the early 2000s, and the iPhone hit the market in 2007. But by 2010, while the iPad was just making its debut (one that would revolutionize mobile computing), mobile tech had already become so ubiquitous that “app” was named the word of the year by the American Dialect Society. Since the introduction of the iPhone, mobile computing has grown staggeringly fast: multi-core mobile system-on-chip processors now deliver desktop-level performance, enabling new applications and user experiences.

According to Pew Research, smartphone saturation stands at an impressive 91% of US adults aged 18-49, up significantly from just 35% in 2011. All of this consumer demand set the stage for the explosion of sensors into everyday life. The first sensing applications were mostly for research or very application-specific functions. But with the smartphone, sensors enabled more contextually-aware devices and delivered more natural experiences and interfaces, fueling customer adoption.

Motion Sensing Goes Mainstream

The first smartphones used touch sensors as a way to replace the standard keyboard. Early on, they started to incorporate accelerometers and gyroscopes to determine a phone’s position in 3D space, magnetometers to enable a compass, and cameras (typically one per phone). Today’s smartphone has many sensors: gyros, accels, mags, and not just one but two cameras, as well as proximity sensors to disable the touchscreen when the phone is in a call so you don’t make a cheek-dial, ambient light sensors to automatically adjust screen brightness, fingerprint sensors, barcode/QR readers, heart rate sensors and more.

As smartphone demand made sensors cost effective, they started to be used in all types of devices: fitness trackers, smart watches, drones, robotic cleaners, smart appliances, game controllers and more. Cost-effective sensors also made it possible to add a wider selection of sensor types to devices, allowing each product generation to get smarter and to better match consumer habits and preferences.

But even before the tidal wave of smartphones flooded the market, sensors were already being used in consumer products to create more natural user experiences. In 2005, Hillcrest Labs launched a new user experience for TV that was differentiated, in part, by a simple handheld motion controller that used an accelerometer, a gyroscope and our specialized software to translate simple hand motions in 3D space into cursor movement on a TV screen. Our proprietary orientation compensation technology let users hold the remote control comfortably and move the cursor intuitively without worrying about the control’s orientation. Based on this, we developed the Loop, a unique handheld motion controller, and a TV-based OS (operating system) called HoME. This ultimately led several major TV manufacturers, including LG, to adopt our MotionEngine software to provide users with an intuitive way to browse content on Smart TVs.

Soon after, in 2006, the Nintendo Wii hit the market with a handheld motion controller that changed video gaming forever. The key to the Wii Remote wasn’t just the combination of its optical sensors and 3-axis MEMS accelerometer (and the later addition of a gyroscope in the Wii Remote Plus), but the ability to deliver a new user experience to consumers at a price point acceptable to the mass market. The Wii console ultimately sold more than 100 million units before being discontinued.

The gaming industry continues to be a hotbed of motion sensing innovation with the advent of augmented and virtual reality (AR/VR) applications. In 2012, Palmer Luckey leveraged his early success at VR headset design into the creation of the Oculus Rift. In the years since then-18-year-old Luckey first launched his Kickstarter for Oculus Rift, the industry has made vast improvements in the underlying technology to address motion sickness, deliver better real-world to virtual-world tracking, and provide lower “motion-to-photon” latency to enable more true-to-life experiences.

One technology that’s key to the huge advancements in AR/VR is the IMU (inertial measurement unit). These multi-axis sensors collect data from accelerometers, gyroscopes, and sometimes magnetometers, using sophisticated sensor fusion algorithms to intelligently “blend” the data from all of these sensors into contextual information about the environment. When paired with a camera, IMUs can be used in both VR headsets and controllers to enable low-latency motion sensing for a smoother and more realistic virtual experience.
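To make the idea of “blending” concrete, here is a minimal sketch of one of the simplest sensor fusion techniques, a complementary filter. It is not Hillcrest’s MotionEngine algorithm; the function name, the `alpha` weight and the single-axis setup are assumptions chosen for illustration. The gyroscope gives a smooth angle that drifts over time, the accelerometer gives a noisy but drift-free angle from gravity, and the filter mixes the two.

```python
import math


def complementary_filter(samples, dt, alpha=0.98):
    """Estimate pitch (radians) from gyro and accelerometer samples.

    samples: iterable of (gyro_rate_rad_s, accel_x_g, accel_z_g) tuples.
    dt:      time between samples, in seconds.
    alpha:   weight given to the gyro path (hypothetical default).
    """
    pitch = 0.0
    history = []
    for gyro_rate, ax, az in samples:
        # Gyro path: integrate angular rate. Smooth, but any bias accumulates.
        gyro_pitch = pitch + gyro_rate * dt
        # Accelerometer path: the direction of gravity gives an absolute angle.
        accel_pitch = math.atan2(ax, az)
        # Blend: trust the gyro short term, the accelerometer long term.
        pitch = alpha * gyro_pitch + (1.0 - alpha) * accel_pitch
        history.append(pitch)
    return history


# A stationary device held at 0.5 rad, with a small constant gyro bias.
true_pitch = 0.5
sample = (0.01, math.sin(true_pitch), math.cos(true_pitch))
estimates = complementary_filter([sample] * 2000, dt=0.01)
print(round(estimates[-1], 2))
```

Integrating the biased gyro alone would drift steadily away from the true angle, while the blended estimate settles close to it. Production-grade fusion typically handles all three axes and uses more elaborate filters.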

And IMUs are opening the way for evolution in many different product categories. For example, robotic vacuum cleaners originally used a “random walk” approach that led to early cleaners moving around a room with no pattern (often missing spots and getting lost). Today, many robotic cleaners are using single-axis gyros to enable dynamic heading for better directionality, but user challenges still persist, limiting adoption.

At Hillcrest, we see an opportunity for the next wave of motion sensor innovation in robotic cleaning. With a multi-axis IMU, robots can tell when they’re tilting off a flat plane, such as when they climb a chair leg. That helps them avoid getting stuck and keep driving straight when transitioning between floor types. IMUs also deliver additional contextual data that will let robot manufacturers improve their AI decision making. By using IMUs in combination with other sensing systems like Visual SLAM or LiDAR, the possibilities seem endless.
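As a rough illustration of how a robot might detect that it has left the flat plane, the sketch below computes the angle between the measured gravity vector and the robot’s vertical axis from a 3-axis accelerometer reading. The function names and the 10-degree threshold are hypothetical, and the approach assumes the robot is not accelerating hard, so that the accelerometer mostly measures gravity.

```python
import math


def tilt_angle_deg(ax, ay, az):
    """Angle in degrees between measured gravity and the robot's z axis."""
    norm = math.sqrt(ax * ax + ay * ay + az * az)
    if norm == 0.0:
        raise ValueError("acceleration vector has zero length")
    # Clamp to guard against rounding slightly outside [-1, 1].
    cos_tilt = max(-1.0, min(1.0, az / norm))
    return math.degrees(math.acos(cos_tilt))


def is_tilted(ax, ay, az, threshold_deg=10.0):
    """True when the robot leans more than threshold_deg off level."""
    return tilt_angle_deg(ax, ay, az) > threshold_deg


# Level floor: gravity lies entirely along z.
print(is_tilted(0.0, 0.0, 1.0))
# Front wheels riding up an obstacle at roughly 20 degrees.
lean = math.radians(20.0)
print(is_tilted(math.sin(lean), 0.0, math.cos(lean)))
```

A real product would likely fuse this with gyroscope data so that bumps and normal driving accelerations are not mistaken for tilt.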

Looking to the Future

Futurists saw that sensors would lead a trend in consumer devices toward interactivity, and independent of those predictions, Hillcrest Labs was founded to make such interactivity possible.

We are excited to have a hand in developing the sensor technology that will shape the applications of the next decade. We see enormous potential for handheld motion control as a yet-untapped green field. We believe that more natural motion capture can unlock a world of new user improvements and enhancements to applications that haven’t even been thought of yet. And beyond that, we see opportunities for unique applications of motion sensing technology like 3D audio (for a realistic, immersive experience) and device attitude alignment and monitoring.

Much like cell phones before them, autonomous systems (and not just robots) are going to become the norm. They will be used in all types of consumer and industrial applications, ranging from predictive maintenance and asset tracking to autonomous cars, fully-connected smart cities and beyond. In fact, MIT Technology Review named “sensing cities” one of its breakthrough technologies of 2018.

The key to all of these future applications? Many different types of sensors being used in combination with sensor fusion to process the raw inputs into useful information. Many robots today already have half a dozen sensors or more. Industrial automation is starting to rely on sensors to automate what were once manually-intensive processes like preventive maintenance, using accelerometers to measure vibrations and microphones to detect the clicks, squeaks or hisses that signal a need for repair.
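As a simple example of using accelerometers to measure vibrations, the sketch below compares the RMS vibration level of a window of accelerometer samples against a known-good baseline. The function names, the `factor` threshold and the sample values are assumptions for illustration; real condition-monitoring systems usually look at frequency content as well.

```python
import math


def vibration_rms(samples):
    """RMS of the samples after removing the mean (for example, gravity)."""
    if not samples:
        raise ValueError("need at least one sample")
    mean = sum(samples) / len(samples)
    return math.sqrt(sum((s - mean) ** 2 for s in samples) / len(samples))


def needs_inspection(samples, baseline_rms, factor=2.0):
    """Flag a machine whose vibration exceeds factor times its baseline."""
    return vibration_rms(samples) > factor * baseline_rms


# Hypothetical readings in g: a healthy machine versus a worn bearing.
healthy = [0.1, -0.1] * 50
worn = [1.0, -1.0] * 50
baseline = vibration_rms(healthy)
print(needs_inspection(healthy, baseline))
print(needs_inspection(worn, baseline))
```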

Human interaction with our surroundings will become more natural and more intuitive as sensors make previously-inanimate everyday objects not only interactive, but even predictive. This is what will enable the IoT “sensing cities” of the not-so-distant future, with autonomous vehicles and smart…well, everything.
