Immersive 3D/spatial audio – combined with XR/360 video – can transport you away from your home and into the middle of a lush forest – with the sound of twigs crunching below you, a deer running off in the east, and the flapping of wings as your eyes follow a cardinal flying away.

Accurate head tracking helps deliver a true-to-life UX, and understanding which factors are key in evaluating a solution will help you navigate this growing industry.

Critical Factors in Head Tracking

For ease of understanding, here’s a summary of the key factors in head tracking.

- Latency: This is the delay from an audiovisual source to when the source is perceived by the user. For the purposes of this post, we break it down into two parts.

- Audio Input Latency: This is the delay from the source of the audio to when it is heard by the user.

- Head Tracking Latency: This is the delay between when your head moves and when the 3D audio processing adapts to the new head direction.

- Head Tracking Accuracy: For this article, we are discussing 3-DOF orientation-only head tracking, and not 6-DOF head tracking with position and orientation. Accuracy refers to the measured difference between real-world motion and its corresponding position in an XR environment. If the sensors (and their algorithms) are not accurate, you might be able to track head motion in real-time but it isn’t going to reflect consistently in the virtual environment.

- Head Tracking Smoothness: This describes how clean and unnoticeable 3D audio transitions are when changing directions. You want to create an XR experience that’s free from jumps. Output that changes abruptly breaks immersion and, in the case of games, can even result in (in-game) death.

Factoring in the Tests

Head Tracking Latency

Latency is not the easiest thing to test without proper measurement equipment, but it can be done subjectively. This study at the Audio Communication Group at TU Berlin showed that the average detection threshold for human subjects was 108 ms, with an absolute detection threshold between 52 and 73 ms for single sound sources. To clarify, theirs is a Total System Latency, which describes the time difference between a physical head movement and the corresponding output of the speakers. In other words, an average of 108 ms could pass before a listener noticed the lag, and with a single sound source, delays as short as 52 to 73 ms could be detected.

When listening to prerecorded music or other audio-only content, audio input latency doesn’t affect the experience. With a recorded video, however, if the display doesn’t delay the picture to account for the audio input latency, there could be lip-sync issues. With video games, you don’t want to delay the picture because picture latency is important to gaming performance, so low audio latency is needed to keep the sound in sync with the game. There will always be some degree of latency, but the key is to minimize it so that the delay isn’t detected by the user.

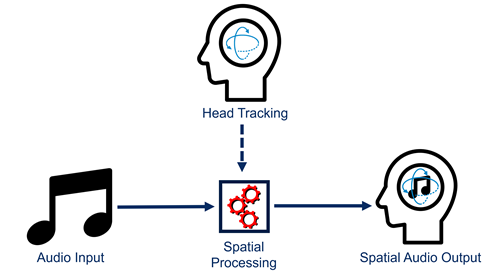

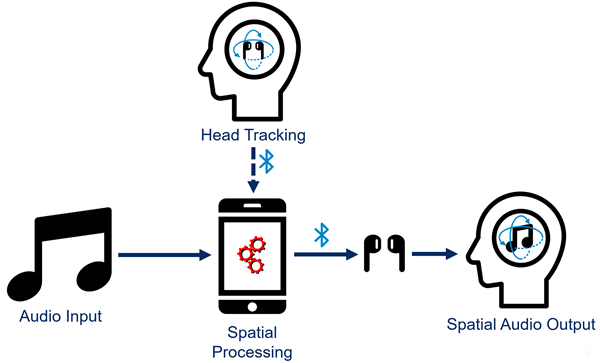

In a spatial audio system, head tracking data is combined with the audio input in a spatial processing stage, generally by applying a Head-Related Transfer Function (HRTF) and possibly some reverb or other room simulation. With this processing in place, there are a couple of common ways to implement a spatial audio system.

If you run the spatial processing algorithms on the audio device itself, only the audio input latency is increased due to the wireless communication. Since there is no wireless link in the head-tracking path, the head tracking latency will still be very low. This is a key advantage to having both the spatial processing and the head tracking performed on the same device.

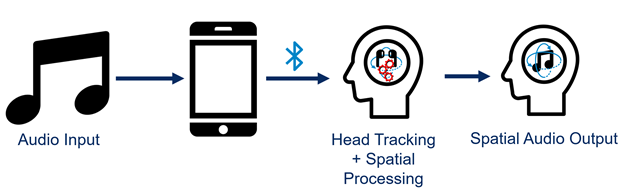

Another approach is to run the spatial audio processing on a mobile device like a phone. Head tracking information is sent from the hearable to the mobile device, which applies it in the spatial processing and then sends the rendered audio back to the hearable. Due to the extra communication link, this creates more head tracking latency than the previous setup. For transmitting the audio from the phone to the earphones, the Bluetooth latency will depend on the audio codec used. The faster codecs can have latency as low as 50-80 ms, but the more common ones can be 170-270 ms. The head tracking data will typically add another 50-100 ms of latency.
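To make the arithmetic concrete, here is a minimal Python sketch that adds up the latency ranges quoted above for the phone-based setup. It assumes the head tracking latency is simply the codec latency plus the head tracking data link and ignores processing time, which is a simplification. The names (`add_ranges` and the constants) are illustrative only and do not come from any real SDK.

```python
# Latency ranges in milliseconds (low, high), taken from the figures above.
FAST_CODEC_MS = (50, 80)
COMMON_CODEC_MS = (170, 270)
HEAD_TRACKING_LINK_MS = (50, 100)


def add_ranges(*ranges):
    """Sum (low, high) latency ranges stage by stage."""
    low = sum(r[0] for r in ranges)
    high = sum(r[1] for r in ranges)
    return (low, high)


# Assumption: head tracking latency ~= codec latency + head tracking link.
print(add_ranges(FAST_CODEC_MS, HEAD_TRACKING_LINK_MS))    # (100, 180)
print(add_ranges(COMMON_CODEC_MS, HEAD_TRACKING_LINK_MS))  # (220, 370)
```

Under these assumptions, even the faster codecs land close to or above the 108 ms average detection threshold mentioned earlier once the head tracking link is included.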

With an understanding of the spatial audio system and knowledge of the research on human latency detection, we can get a rough idea of how good, or bad, the latency of a spatial audio system is. To test latency, try listening to something higher in frequency. Lower-frequency sound doesn’t have much directionality (this is why stereo systems generally only have one subwoofer).

A good sound source for testing latency would be a continuous sound that localizes well. Ideally, this sound source is a mix of multiple frequencies as well, but for the sake of explanation, consider a higher pitched sound that’s constantly playing. The higher frequency(/ies) allows for easier identification, and the constant tone allows you to notice distinct changes in the audio image.

Imagine you had a headset with a head tracking latency of 200 ms. And let’s say that for good audio rendering, we want the audio image to move no more than 5 degrees. That means the user would need to always move slower than 25 deg/s (5 degrees divided by 0.2 seconds). To picture this, it would take 3.6 seconds to turn your head 90 degrees. This is fairly slow, and you’ll likely move faster than this normally.

For testing, if you turn your head 90 degrees in about 1/4 second, you’ll be moving at 360 degrees per second. With 200 ms of latency, the source will be displaced by 72 degrees, but it will only be in the wrong spot for ~200 ms. With a continuous sound as your reference, the latency should be clearly noticeable.
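The numbers in these two examples come from one relationship: the angular error is the head speed multiplied by the latency. Here is a small Python sketch of that calculation; the function names are made up for illustration.

```python
def angular_error_deg(head_speed_deg_per_s, latency_ms):
    """How far the audio image lags behind while the head is turning."""
    return head_speed_deg_per_s * latency_ms / 1000.0


def max_head_speed_deg_per_s(max_error_deg, latency_ms):
    """Fastest head speed that keeps the audio image within max_error_deg."""
    return max_error_deg * 1000.0 / latency_ms


# Slow case: keep the image within 5 degrees at 200 ms of latency.
speed = max_head_speed_deg_per_s(5, 200)
print(speed)        # 25.0 deg/s
print(90 / speed)   # 3.6 seconds to turn 90 degrees

# Test case: a 90 degree turn in 1/4 second is 360 deg/s.
print(angular_error_deg(360, 200))  # 72.0 degrees
```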

Accuracy, Precision, and Smoothness

Accuracy relates to how close the tracked motion is to the real-world/true answer. Precision relates to how consistently you can get the same answer. Without the use of a full 9-axis solution with a magnetometer, true accuracy cannot be measured. However, because audio hardware uses magnetic drivers, and because the user’s environment keeps changing, a full 9-axis head tracking solution is not realistic. This is why most spatial audio hardware uses accelerometers and gyroscopes only.

Testing precision and smoothness is a bit tricky to do, but depending on your spatial audio software, you should be able to see how well it reacts. Clean voice audio (like a podcast) is probably the best to test these criteria with. In a podcast, the speakers are in stationary positions, so whichever way you turn your head, their sound should come from the same spot. And when you move your head, the change in 3D audio from one position to another should occur without noticeable steps or changes in volume or quality.
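The "voices stay put" expectation can be written down in a few lines. This is a minimal sketch, assuming yaw-only (3-DOF) tracking with angles in degrees and positive values to the right; the function name is hypothetical. The direction fed to the spatial renderer is the source's fixed room direction minus the current head yaw.

```python
def head_relative_azimuth_deg(source_azimuth_deg, head_yaw_deg):
    """Direction of a room-fixed source as heard from the current head pose.

    The result is wrapped to the range [-180, 180).
    """
    return (source_azimuth_deg - head_yaw_deg + 180.0) % 360.0 - 180.0


# A voice straight ahead in the room (0 degrees):
print(head_relative_azimuth_deg(0, 0))    # 0.0   -> heard straight ahead
print(head_relative_azimuth_deg(0, 30))   # -30.0 -> turn right, voice moves left
print(head_relative_azimuth_deg(0, -90))  # 90.0  -> turn left, voice is on the right
```

If the tracked yaw is wrong or late, this relative angle is wrong by the same amount, which is exactly what you are listening for in the podcast test.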

The gyroscope sensors in 3D/spatial audio headsets are liable to drift, which lowers the overall precision of the headset. Software typically offers several options for dealing with this: manual recentering, slow stabilization, or fast stabilization.

If you accept the drift as is, you will notice over time that the speakers seem to be slowly moving about the room. Maybe they were right in front of you initially, but now they’re slightly off center to the left. This is not ideal. You can manually recenter the device by hitting a designated button (physical or software) to say “I am looking straight ahead again” and reset the drift. However, drift will still build up again over time. A slow recenter takes advantage of the fact that your head is usually pointed in the direction of the content. By making this assumption, it can reset the gyroscope drift over a period of minutes. Fast recentering uses the same idea but applies the correction much sooner, in a matter of seconds.
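One simple way to picture slow and fast recentering is a "forward" direction that is gradually pulled toward wherever the head is pointing, with a time constant that sets how quickly it follows. The Python sketch below is an illustration under that assumption, not a description of any particular product's algorithm; the class name, method names, and the 3 s and 120 s time constants are all hypothetical.

```python
import math


def wrap_deg(angle):
    """Wrap an angle to the range [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0


class AutoRecenter:
    """Pulls the 'forward' direction toward the current yaw over time."""

    def __init__(self, time_constant_s):
        self.time_constant_s = time_constant_s
        self.center_deg = 0.0

    def recenter(self, raw_yaw_deg):
        """Manual recenter: 'I am looking straight ahead again'."""
        self.center_deg = wrap_deg(raw_yaw_deg)

    def update(self, raw_yaw_deg, dt_s):
        """Feed one yaw sample; returns yaw relative to the moving center."""
        # Fraction of the remaining offset absorbed during this step.
        alpha = 1.0 - math.exp(-dt_s / self.time_constant_s)
        error = wrap_deg(raw_yaw_deg - self.center_deg)
        self.center_deg = wrap_deg(self.center_deg + alpha * error)
        return wrap_deg(raw_yaw_deg - self.center_deg)


def simulate_hold(time_constant_s, offset_deg=10.0, seconds=10.0, rate_hz=100.0):
    """Hold a constant yaw offset and return what is left of it afterwards."""
    tracker = AutoRecenter(time_constant_s)
    relative = offset_deg
    for _ in range(int(seconds * rate_hz)):
        relative = tracker.update(offset_deg, 1.0 / rate_hz)
    return relative


print(round(simulate_hold(3.0), 2))    # fast: most of the 10 degree offset is gone
print(round(simulate_hold(120.0), 2))  # slow: most of the offset is still there
```

In this sketch, a short time constant absorbs a 10 degree offset within about ten seconds, while a long one barely reacts in that time, which is why the slow setting ignores an occasional glance away from the screen.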

Which method of auto-centering is ideal depends on the use case. If you’re looking in the same direction at the screen, slow recentering is ideal as it ignores the occasional glance away from the screen and keeps the center where you spend your time. At the start of a session, resetting the ‘forward’ direction helps give it a guide and prevents you from having to wait a few minutes for the algorithm to adjust. However, if you’re playing a game on multiple screens at home, playing an action-packed game on your phone, or physically walking around (a park, for example), the direction you look will be changing with relatively high frequency. Fast recentering is better suited to keeping up with these types of situations.

As you move your head around while listening to a podcast, try to notice how well the voices are tracked in the room, and how smoothly their position changes when it drifts (or if you notice drift at all). The main component of spatial audio smoothness is how cleanly it transitions from one location to another. Whether you move your head slowly or quickly, clean, imperceptible changes in audio location are a sign of a criminally smooth algorithm. If you notice jumps or distinct quantization of the audio as you move your head around, it could be a sign of a jump correction, or of the sensor/system not translating motion smoothly.
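If you happen to have access to the logged yaw output of a head tracker, the same idea can be checked numerically rather than by ear. The following Python sketch flags samples where the yaw changes by more than a plausible head speed would allow. The function name and the 500 deg/s limit are assumptions chosen for illustration.

```python
def find_jumps(yaw_deg, sample_rate_hz, max_speed_deg_per_s=500.0):
    """Return the indices where yaw steps faster than a head plausibly moves."""
    max_step = max_speed_deg_per_s / sample_rate_hz
    jumps = []
    for i in range(1, len(yaw_deg)):
        # Shortest angular difference, so 179 -> -179 counts as 2 degrees.
        step = abs((yaw_deg[i] - yaw_deg[i - 1] + 180.0) % 360.0 - 180.0)
        if step > max_step:
            jumps.append(i)
    return jumps


# 100 Hz log with a sudden 10 degree step at sample 3.
print(find_jumps([0.0, 1.0, 2.0, 12.0, 13.0], sample_rate_hz=100))  # [3]
```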

The world of 3D/spatial audio is becoming mainstream as big tech companies create products integrating it. The more products there are, the more you need to know how to pick the best. Even though the evaluations I’ve described above are largely subjective, I hope that explaining the ideas and the logic behind the tests has helped you navigate your way through this… space (weak, I know). If you want some visualizations of the importance of head tracking latency, or maybe some more information on HRTFs, check out this webinar VoD. If this post or the webinar sparked your interest, send us a note to see which CEVA products best support your project.

Published on AudioXpress.
