In our day-to-day lives, technology-driven experiences fall into different categories depending on how well we understand the technology and its origins. For example, let’s consider something that a sizable chunk of the world population experiences, and then something that all living creatures (and inanimate things) experience.

About 63% of the world population accesses the internet [Source: Statista], and a majority of them experience the internet through web pages. As such, the general population uses the terms “internet” and “web” interchangeably. Of course, those in the technology arena do know the difference, but may or may not remember when and where the World Wide Web (WWW) was invented. Without its invention, the internet experience of today would not be the same.

All living creatures automatically experience their “mass”, which the general population interchangeably and inaccurately refers to as “weight”. Of course, those who remember their physics know the difference. While material mass is taken for granted in general physics, there is a field of physics that tries to explain what gives materials their mass. The existence of the mass-giving field was confirmed when the Higgs boson particle was discovered.

The organization that is behind both the WWW invention and the Higgs boson discovery and many other remarkable inventions is CERN. The World Wide Web was invented in 1989 by Tim Berners-Lee while working at CERN. The existence of the mass-giving field was confirmed in 2012, when the Higgs boson particle was discovered at CERN.

CERN is currently working on an interesting particle physics project by leveraging CEVA’s Edge AI technologies and solutions. This post will discuss that project, why and how that came about, and present some benchmark results of CEVA’s Edge AI technologies against alternative solutions.

What is Edge AI?

Edge AI refers to artificial intelligence applications deployed on devices away from the data centers (the cloud) and closer to the consumers (the edge). It is called so because the computation is done near the edge of the network rather than in a data center. Edge AI techniques are being leveraged across a number of applications and have many benefits such as performance improvements, data privacy, reduced power consumption, and more.

What is Particle Physics?

Particle physics is the branch of physics that deals with the properties, relationships, and interactions of subatomic particles. Everything in the universe is made of particles. The Standard Model of particle physics is the theory that describes the electromagnetic, weak nuclear and strong nuclear forces and classifies all known elementary particles. Quarks, a type of elementary particle and a fundamental constituent of matter, are among the particles it classifies. Quarks combine to form composite subatomic particles called hadrons. Protons and neutrons are the most stable among the known hadrons.

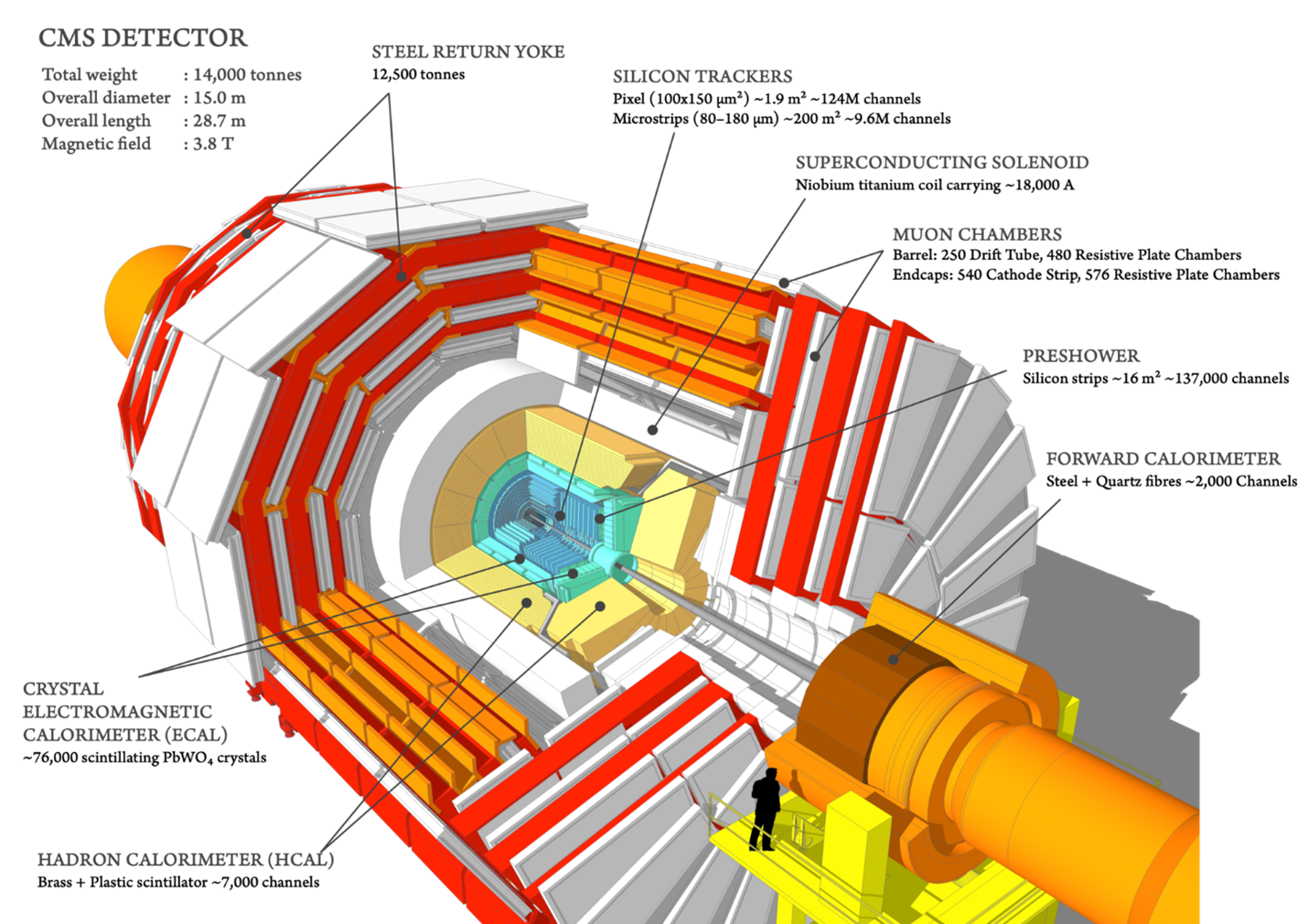

When the universe began, all particles sped around at the speed of light because they had no mass. Stars, planets and, subsequently, life formed only after particles gained mass. The Higgs field, which is associated with the Higgs boson particle, is what gives particles their mass. As such, this discovery is of huge significance in the field of particle physics. The story goes that Nobel Prize-winning physicist Leon Lederman referred to this particle as the “Goddamn Particle” to highlight how difficult it was to detect.

CERN is home to the Large Hadron Collider (LHC), the world’s largest and most powerful particle accelerator. The LHC consists of a 27-kilometre ring of superconducting magnets with a number of accelerating structures that boost the energy of the particles along the way. Collisions occur at a few points along the ring, and building-sized detectors are located at these points to analyze them. One of these is the CMS experiment detector, which is 28.7 m long, 15 m in diameter, and weighs about 14,000 tons. The CMS collaboration, which built and now operates the detector, comprises over 4,000 people representing 206 scientific institutes and 47 countries.

How do Edge AI and Particle Physics Intersect?

While the industry is abuzz with news about the use of AI in many consumer-oriented applications, not many discuss the use of AI in particle physics. In practice, AI has augmented and improved particle physics studies for many decades. For example, neural networks, which are fundamental to AI algorithms, were used in the discovery of the Higgs boson particle. AI gives physicists a better ability to reconstruct particles from the hadron collision debris and interpret the results.

Edge AI solutions are also known for their power efficiency compared with data-center-oriented solutions. High power consumption is a known issue with server farms due to their compute-intensive hardware and 24×7 operation. Particle physics experiments also run 24×7 and are highly data and compute intensive, making them very bandwidth and power hungry. An Edge AI hardware-based solution that can compress the huge amounts of data produced by particle physics experiments is therefore very attractive for power-efficiency reasons as well.

CERN’s Particle Physics Project

CERN uses the world’s largest hadron collider and other complex scientific instruments in its projects. The experiments typically run on a 24×7 basis, and the particle collisions generate far more data than can be stored for later processing. The amount of raw data from each crossing is approximately 1 megabyte, which at the 40 MHz crossing rate would result in 40 terabytes of data a second, an amount that the experiment cannot hope to store, let alone process properly. The full trigger system reduces the rate of interesting events down to a manageable 1,000 per second. As most of the generated data is not of value, the AI processing algorithms need to process the data on the fly, efficiently and effectively, to decide which of the collisions are of interest. The hardware solutions that implement the AI algorithms need to be high-performing and extremely power efficient.

The LHC operates at a nominal proton-proton collision rate of 40 MHz. The trigger system reduces this rate in two stages:

- x400 in Level 1

- x100 in Level 2
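
The figures quoted above can be checked with a few lines of arithmetic. The following is a minimal sketch that uses only the numbers stated in this post (1 megabyte per crossing, a 40 MHz crossing rate, and the x400 and x100 reduction factors); the function and variable names are illustrative and do not come from CERN or CEVA.

```python
# Figures quoted in the text; all names here are illustrative.
CROSSING_RATE_HZ = 40_000_000    # 40 MHz nominal crossing rate
BYTES_PER_CROSSING = 1_000_000   # roughly 1 megabyte of raw data per crossing
LEVEL1_REDUCTION = 400           # x400 in Level 1
LEVEL2_REDUCTION = 100           # x100 in Level 2


def raw_data_rate_tb_per_s(rate_hz, bytes_per_crossing):
    """Raw data rate in terabytes per second (1 TB = 1e12 bytes)."""
    return rate_hz * bytes_per_crossing / 1e12


def rate_after_trigger(rate_hz, *reductions):
    """Event rate left after applying each reduction factor in turn."""
    for factor in reductions:
        rate_hz = rate_hz / factor
    return rate_hz


raw_tb = raw_data_rate_tb_per_s(CROSSING_RATE_HZ, BYTES_PER_CROSSING)
after_l1 = rate_after_trigger(CROSSING_RATE_HZ, LEVEL1_REDUCTION)
after_l2 = rate_after_trigger(CROSSING_RATE_HZ, LEVEL1_REDUCTION, LEVEL2_REDUCTION)

print(f"raw data rate: {raw_tb:.0f} TB/s")
print(f"after Level 1: {after_l1:,.0f} events/s")
print(f"after Level 2: {after_l2:,.0f} events/s")
```

The two stages together give a 40,000-fold reduction, which takes the 40 MHz crossing rate down to the 1,000 events per second mentioned earlier.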

Performance, Power and Scalability Requirements

The current solution takes too much compute time and power to process the generated data. CERN also expects to increase the collision rate fourfold in the near future. At the same time, the latency of the Level 2 processing algorithm is expected to increase twelvefold. CERN needs a better, more scalable solution in terms of both performance and power consumption.

CEVA’s Edge AI Solution

CERN wanted to reduce the bit representation of the neural network down to 2 bits in order to reduce bandwidth and latency. This required dedicated hardware. However, no efficient AI hardware on the market supported 2-bit multiplication. After exploring various solutions on the market, and thanks to the endorsement and sponsorship of the Israel Innovation Authority (IIA), CERN decided to work with CEVA to address its requirements.

CEVA is a leading licensor of wireless connectivity, smart sensing technologies, and integrated IP solutions for a smarter, safer, connected world. They provide Digital Signal Processors, AI engines, wireless platforms, cryptography cores and complementary software for sensor fusion, image enhancement, computer vision, voice input and artificial intelligence.

CEVA suggested a deep learning solution to do both detection and classification of jet particles. In particle physics, a jet is a narrow cone of hadrons and other particles produced by the hadronization of a quark. It was agreed that CERN would develop the network compression algorithms while CEVA would develop a low-bit AI DSP core.

CEVA experimented with hardware for Binary Neural Networks (BNN) and Ternary Weight Networks (TWN). As part of the exploration done with the SensPro DSP, CEVA evaluated 2-bit precision (ternary representation: -1, 0, 1) and 1-bit precision (binary representation: 0, 1). A BNN acceleration block was designed as part of the SensPro core in order to offload 8×2 (data × weights) and 2×2 convolutions. Typical neural network quantization achieves 4x compression relative to 32-bit floating point by performing arithmetic in 8-bit fixed-point precision. But as already noted, CERN’s extreme latency requirements called for 2-bit weights. Data was maintained in 32-bit floating point, although they plan to switch to 8-bit fixed point in the future.
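
To make the ternary representation more concrete, here is a minimal NumPy sketch of one common way to map floating-point weights to {-1, 0, 1} and pack them at 2 bits each. The post does not describe the scheme CERN and CEVA actually used, so the thresholding heuristic (a threshold of 0.7 times the mean absolute weight, as used in the Ternary Weight Networks literature), the packing layout, and all function names are assumptions made for illustration.

```python
import numpy as np


def ternarize(weights, threshold_factor=0.7):
    """Map float weights to codes in {-1, 0, +1} plus one scale factor.

    Assumed heuristic: weights whose magnitude is below
    threshold_factor * mean(|w|) become 0; the rest keep their sign.
    """
    w = np.asarray(weights, dtype=np.float32)
    delta = threshold_factor * np.mean(np.abs(w))
    codes = np.zeros(w.shape, dtype=np.int8)
    codes[w > delta] = 1
    codes[w < -delta] = -1
    mask = codes != 0
    # Scale factor: mean magnitude of the weights that were kept.
    alpha = float(np.mean(np.abs(w[mask]))) if mask.any() else 0.0
    return codes, alpha


def pack_2bit(codes):
    """Pack ternary codes four to a byte (hypothetical layout)."""
    flat = (codes.ravel() + 1).astype(np.uint8)  # {-1, 0, 1} -> {0, 1, 2}
    pad = (-flat.size) % 4                       # pad to a multiple of 4
    flat = np.concatenate([flat, np.zeros(pad, dtype=np.uint8)])
    g = flat.reshape(-1, 4)
    packed = g[:, 0] | (g[:, 1] << 2) | (g[:, 2] << 4) | (g[:, 3] << 6)
    return packed.astype(np.uint8)


def unpack_2bit(packed, count):
    """Inverse of pack_2bit; returns the first `count` ternary codes."""
    shifts = np.array([0, 2, 4, 6], dtype=np.uint8)
    fields = (packed[:, None] >> shifts) & 0b11
    return fields.ravel()[:count].astype(np.int8) - 1


weights = np.array([0.9, -0.05, -0.7, 0.1, 0.4, -0.3, 0.02, -0.8],
                   dtype=np.float32)
codes, alpha = ternarize(weights)
packed = pack_2bit(codes)
restored = unpack_2bit(packed, codes.size)

print("codes:", codes.tolist(), "scale:", round(alpha, 3))
print("float32 bytes:", weights.nbytes, "packed bytes:", packed.nbytes)
```

In this sketch eight 32-bit weights shrink from 32 bytes to 2 bytes, a 16x reduction, compared with the 4x that 8-bit quantization gives.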

The “images” that were input to the Object Detection Network were obtained by projecting the lower level detector measurements (Level 1) of the emanating particles onto a cylindrical detector (calorimeter). In particle physics, a calorimeter is an apparatus that measures the energy particles deposit as they pass through it. A rectangular image was then obtained by unwrapping the inner surface of the calorimeter. The jet images were interpreted with calorimeter cells as pixels, where pixel intensity maps the energy deposit of the cell. The x-axis of the image was the angle of collision and the y-axis was the energy of the cell.

When developing an Edge AI solution, it is important to understand which neural network topologies to work with to implement the processing algorithms. CEVA provided that guidance and also advised CERN on how to represent the data and weights correctly to ensure the deployment will be smooth with minimal error. CERN established a Quantization Aware Training algorithm that took into account all relevant inputs from CEVA regarding actual hardware deployment. Quantization Aware Training helps train neural networks for lower precision deployment, without compromising on accuracy.
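
The general idea behind Quantization Aware Training can be illustrated with a toy example. The sketch below is not CERN’s algorithm, which this post does not describe. It assumes a single linear layer, a ternary quantizer, and the widely used straight-through estimator, in which the forward pass uses the quantized weights while the gradient is applied to a full-precision “latent” copy. All names and hyperparameters are hypothetical.

```python
import numpy as np


def ternarize(w, threshold_factor=0.7):
    """Quantize float weights to alpha * {-1, 0, +1} (assumed heuristic)."""
    delta = threshold_factor * np.mean(np.abs(w))
    codes = np.where(w > delta, 1, np.where(w < -delta, -1, 0))
    mask = codes != 0
    alpha = np.mean(np.abs(w[mask])) if mask.any() else 0.0
    return codes, alpha


def train_qat(x, y, steps=200, lr=0.1):
    """Fit y ~ x @ w where w is constrained to ternary values.

    The forward pass uses the quantized weights, while the gradient is
    applied to full-precision latent weights (straight-through estimator,
    i.e. the quantizer is treated as the identity in the backward pass).
    """
    latent = np.zeros(x.shape[1])
    for _ in range(steps):
        codes, alpha = ternarize(latent)
        w_q = alpha * codes                     # weights the hardware would see
        err = x @ w_q - y
        grad = 2.0 * x.T @ err / len(y)         # d(MSE)/d(w_q)
        latent -= lr * grad                     # update the latent copy
    codes, alpha = ternarize(latent)
    loss = float(np.mean((x @ (alpha * codes) - y) ** 2))
    return codes, float(alpha), loss


# Synthetic data whose true weights are exactly ternary with scale 0.5.
rng = np.random.default_rng(0)
x = rng.normal(size=(512, 4))
true_w = 0.5 * np.array([1.0, 0.0, -1.0, 1.0])
y = x @ true_w

codes, alpha, loss = train_qat(x, y)
print("codes:", codes.tolist(), "scale:", round(alpha, 4), "loss:", loss)
```

Because the quantizer sits inside the training loop, the latent weights settle at values that still work after rounding, rather than being rounded once training has finished.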

Benchmark Results of CEVA’s SensPro-based Edge AI Solution

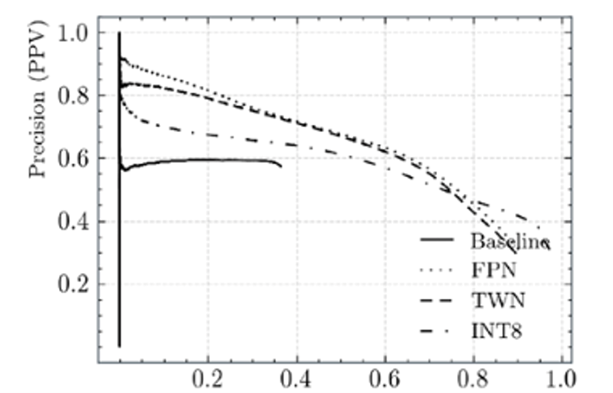

The results surpassed all existing algorithmic solutions. In the precision-recall curves below, Precision (PPV) measures the fraction of predicted jets that are real jets, while Recall (TPR) measures the fraction of real jets that the network finds. Collectively, they determine how well the found set of jets corresponds to the set expected to be found.

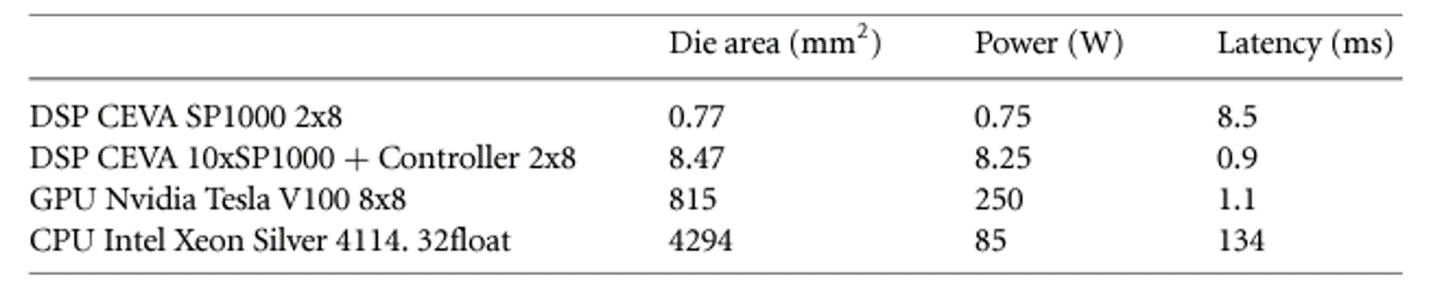
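
For readers less familiar with these metrics, the sketch below shows how one point on a precision-recall curve is computed from detection scores and ground-truth labels. The labels and scores are made-up illustrative values, not CERN or CEVA results, and the function name is hypothetical.

```python
def precision_recall(labels, scores, threshold):
    """Precision and recall when scores >= threshold count as detections."""
    tp = fp = fn = 0
    for label, score in zip(labels, scores):
        predicted = score >= threshold
        if predicted and label:
            tp += 1          # real jet, detected
        elif predicted and not label:
            fp += 1          # detection with no real jet behind it
        elif label:
            fn += 1          # real jet that was missed
    precision = tp / (tp + fp) if tp + fp else 1.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Made-up example: 1 marks a real jet, 0 marks background.
labels = [1, 1, 0, 1, 0, 0, 1, 0]
scores = [0.95, 0.85, 0.80, 0.60, 0.55, 0.40, 0.30, 0.10]

# Sweeping the threshold traces out the precision-recall curve.
for threshold in (0.9, 0.5, 0.2):
    p, r = precision_recall(labels, scores, threshold)
    print(f"threshold={threshold:.1f}  precision={p:.2f}  recall={r:.2f}")
```

Lowering the threshold finds more of the real jets (higher recall) at the cost of more false detections (lower precision), which is the trade-off the curves visualize.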

CEVA’s DSP cores were able to outperform both GPU and CPU solutions. Refer to the table below to compare how the cores performed on die area, power and latency. The second row of the table shows an apples-to-apples comparison of a CEVA SensPro-based solution against the NVIDIA Tesla V100 GPU core.

Summary

As covered in this blog, Edge AI solutions are not just for consumer-oriented edge device applications. They can be an effective approach even for applications that look into the very origins of our universe. That certainly was the case with CEVA’s SensPro-based Edge AI solution developed for CERN, the premier research institution in the field of particle physics. You can explore how you could benefit from many of CEVA’s Edge AI solutions by visiting CEVA’s website.
