Director of Automotive Market Segment, CEVA. Jeff is using his fifteen years of experience in the automotive industry to expand CEVA’s imaging & vision product line into a variety of automotive applications. He holds a B.Sc. in Electrical Engineering and a Master of Business Administration from Michigan State University.

Debate on whether electronic architectures in cars should be centralized, distributed, or hybrid.

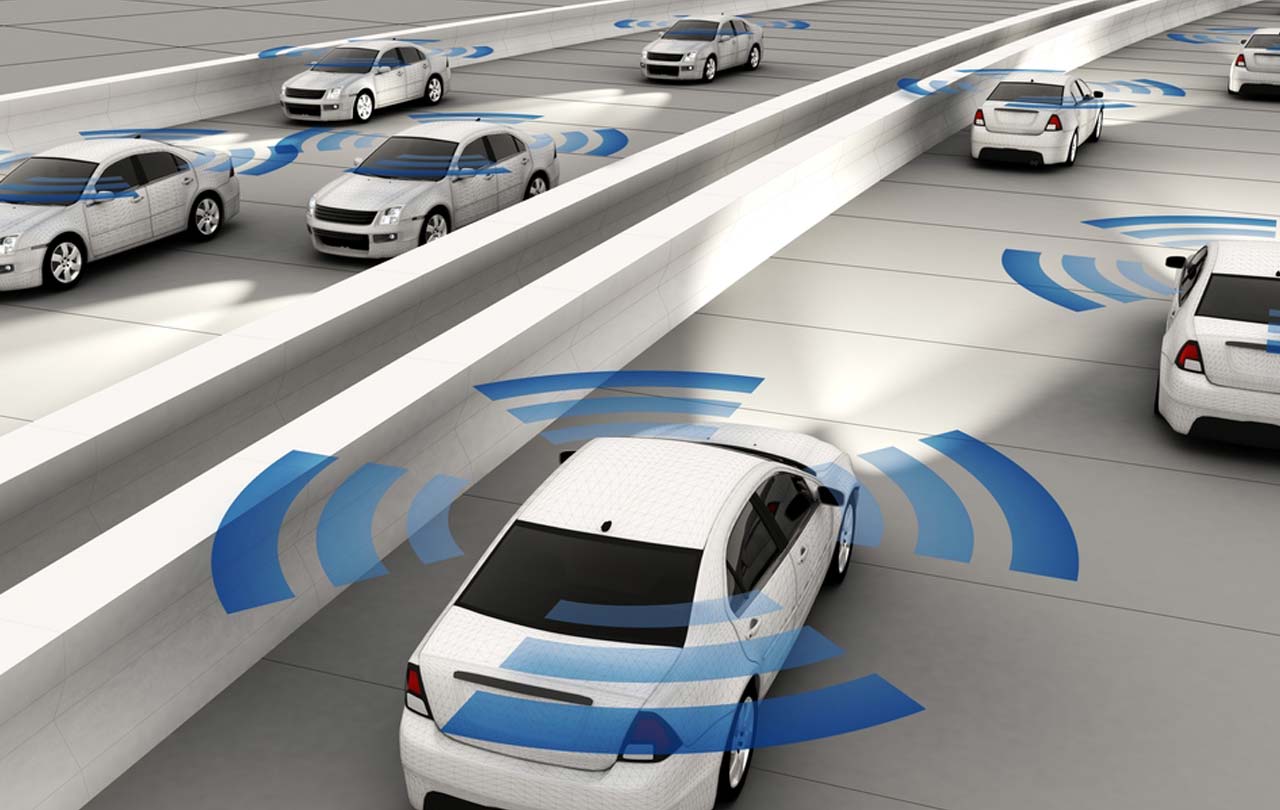

After returning from the AutoSens and Auto.AI conferences in Europe, it was obvious to me that the recurring debate on whether electronic architectures in cars should be centralized, distributed, or a hybrid of the two is still very much open. As we continue to add more safety features to cars, we tend to take the “add a feature, add a box” approach. As these stand-alone safety features become pieces of an automated driving system, there needs to be a move to a more disciplined architecture.

Automotive sensors are becoming more challenging.

For these more automated systems, the natural inclination is to centralize, hence the announcements you will see for end-to-end electronics (E3) from Audi, BMW and others. Put all the intelligence in one (or a few) central processors and have “dumb” sensors and actuators around the car connect to that hub. Some also argue that this simplifies safety and security management – put all your smarts in that hub and manage the hub very carefully.

But of course, it’s never quite that easy. You may even have a sense that this is a replay of the cloud/edge debate, especially when you think about machine learning (ML), safety/security and power. Maybe we should push more capability into the “edge” nodes in the car – all those sensors. In part this is simply due to how many sensors we are now seeing in cars – say 12 cameras, 8-12 radars and 2 LIDARs. Those sensors generate a huge amount of data. The centralized hub argument would push all that raw data to the hub for processing. But that traffic requires a network supporting many Gbps and centralized compute able to handle more than 500 TOPS while burning as much as a kilowatt.
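To get a feel for the camera share of that traffic, here is a rough back-of-envelope sketch. Only the count of 12 cameras comes from the figures above; the resolution, bit depth and frame rate are assumed values chosen purely for illustration, and radar and LIDAR are left out because their raw rates vary too widely to guess.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Camera:
    """Hypothetical camera parameters (assumptions, not from any datasheet)."""
    width: int            # pixels per row
    height: int           # rows per frame
    bits_per_pixel: int   # raw sensor bit depth
    fps: int              # frames per second

    def raw_bps(self) -> int:
        """Uncompressed bit rate of one camera, in bits per second."""
        return self.width * self.height * self.bits_per_pixel * self.fps


def aggregate_gbps(per_sensor_bps: int, count: int) -> float:
    """Total raw rate for `count` identical sensors, in Gbps."""
    return per_sensor_bps * count / 1e9


# Assumed: 1920x1080, 12-bit raw, 30 fps. The count of 12 is from the article.
cam = Camera(width=1920, height=1080, bits_per_pixel=12, fps=30)
camera_gbps = aggregate_gbps(cam.raw_bps(), count=12)

print(f"one camera : {cam.raw_bps() / 1e6:.1f} Mbps")
print(f"12 cameras : {camera_gbps:.2f} Gbps")
```

Under these assumptions the cameras alone come to roughly 9 Gbps before any radar or LIDAR traffic is added, which is consistent with the "many Gbps" estimate. Higher resolutions or frame rates would push the number up quickly.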

Then we must wonder about the safety claim. Amid all that centralized traffic and compute, how well can we guarantee response times to critical events? And how do we engineer redundancy into that system? Duplicating the hub and the networks would be expensive. And what is the fallback if the central system fails? Such a solution doesn’t seem ideal for limp-home options or even perhaps critical safety responses.

On the other hand, putting all the smarts in the sensors can’t be the right solution either – that’s back to the box-per-feature approach. But as we learned from other edge devices, we don’t want the sensors to be dumb either. This is especially true of vision-related sensors. Raw traffic from a vision sensor can be massive. It makes sense to condense that as much as possible at each sensor: do the basic object recognition, edge detection, etc. at the camera and let the central processor handle sensor fusion on those object lists.

An obvious plus is that networks from the sensors to the hubs don’t need to support raw data traffic rates. Which also means that power associated with communication will be greatly reduced. And the hub doesn’t need the same kind of performance since it now can deal with object lists rather than raw data. There’s also a safety argument. When intelligence is distributed, there’s no single point of failure. Parts of the system can break, and you can still limp home. Moreover, each smart sensor can afford to focus more on redundancy in its own functions so even if it is compromised, it can fail gracefully as a part of the whole system.
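To make the bandwidth difference concrete, here is a minimal sketch comparing a raw video stream with an object list sent by a smart camera. The 20-byte record layout, the 50 objects per frame and the camera parameters are all hypothetical values for illustration; real sensor interfaces define their own formats.

```python
import struct

# Hypothetical object record, little-endian with no padding:
#   H  track id            (2 bytes)
#   B  object class        (1 byte)
#   f  x, y position in m  (2 x 4 bytes)
#   f  vx, vy in m/s       (2 x 4 bytes)
#   B  confidence 0..255   (1 byte)
OBJECT_RECORD = struct.Struct("<HBffffB")


def pack_object(track_id, class_id, x, y, vx, vy, confidence):
    """Serialize one detected object into the assumed wire format."""
    return OBJECT_RECORD.pack(track_id, class_id, x, y, vx, vy, confidence)


def object_list_bps(objects_per_frame, fps):
    """Bit rate of an object list stream, ignoring framing overhead."""
    return objects_per_frame * OBJECT_RECORD.size * 8 * fps


def raw_video_bps(width, height, bits_per_pixel, fps):
    """Bit rate of an uncompressed video stream."""
    return width * height * bits_per_pixel * fps


# Assumed workload: 50 objects per frame at 30 fps versus 1080p 12-bit raw video.
reduced = object_list_bps(objects_per_frame=50, fps=30)
raw = raw_video_bps(width=1920, height=1080, bits_per_pixel=12, fps=30)
reduction = raw / reduced

print(f"record size : {OBJECT_RECORD.size} bytes")
print(f"object list : {reduced / 1e3:.0f} kbps")
print(f"raw video   : {raw / 1e6:.1f} Mbps")
print(f"reduction   : about {reduction:.0f}x")
```

With these made-up numbers the object list needs a few hundred kbps where the raw stream needs hundreds of Mbps. The exact ratio depends entirely on the assumptions, but the order-of-magnitude gap is the point.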

In this approach, the functions that are centralized are fusion and higher-level analytics on object lists from the sensors, path planning (driving or guiding the car to lane centering or between obstacles) and decision making (for example, whether the car should slam on the brakes).
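As a rough illustration of what the hub does with those object lists, here is a toy sketch of fusion followed by a braking decision. The greedy distance-gate association, the two-sensor corroboration rule and every threshold are assumptions made for this example; production systems use far more sophisticated tracking and planning.

```python
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class Detection:
    sensor: str           # which sensor reported it, e.g. "camera" or "radar"
    x: float              # metres ahead of the car
    y: float              # metres left (+) or right (-) of the centreline
    closing_speed: float  # m/s, positive when the gap is shrinking


def _mean(values):
    values = list(values)
    return sum(values) / len(values)


def fuse(detections, gate_m=1.5):
    """Greedy association: add a detection to the first cluster whose mean
    position lies within gate_m, otherwise start a new cluster."""
    clusters = []
    for det in detections:
        for cluster in clusters:
            cx = _mean(d.x for d in cluster)
            cy = _mean(d.y for d in cluster)
            if hypot(det.x - cx, det.y - cy) <= gate_m:
                cluster.append(det)
                break
        else:
            clusters.append([det])
    return clusters


def should_brake(clusters, lane_half_width_m=1.8, ttc_limit_s=2.0, min_sensors=2):
    """Brake if an in-lane object, confirmed by at least min_sensors distinct
    sensors, has a time-to-collision below ttc_limit_s."""
    for cluster in clusters:
        if len({d.sensor for d in cluster}) < min_sensors:
            continue
        x = _mean(d.x for d in cluster)
        y = _mean(d.y for d in cluster)
        v = _mean(d.closing_speed for d in cluster)
        if abs(y) <= lane_half_width_m and v > 0 and x / v < ttc_limit_s:
            return True
    return False


object_lists = [
    Detection("camera", 20.0, 0.2, 12.0),
    Detection("radar", 20.5, 0.0, 12.5),
    Detection("camera", 40.0, 5.0, 0.0),   # off to the side, not closing
]
clusters = fuse(object_lists)
print(len(clusters), "fused objects; brake:", should_brake(clusters))
```

Note that the hub here never touches pixels or radar returns. It works only on compact object records, which is what lets it run on a much more modest compute budget.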

So, problem solved: make all the sensors relatively smart and have a lower-bandwidth network, right? Not so fast. First, smarter sensors make the car more expensive. And repairs become more expensive; a fender replacement now carries the added cost of multiple built-in sensors. There’s another problem. When you put all the smarts in a sensor, you eliminate the possibility of doing new and creative things at the hub that you could do if you also had access to the raw data. Some cameras may serve multiple purposes – to provide a backup view, for example, but also for (ML-assisted) automated parking. In other words, the hub would like both the raw data and the reduced data.

Point being, this is not a settled problem. I think most automakers would like a solution somewhere in the middle, but where you place that middle is still very much up for debate. Which means that solutions for smart sensors need to provide the smarts while also being cost-effective and power-efficient. These solutions need to fit end-to-end architectures that lean toward centralization as much as those that lean toward distribution. And they need to support fail-safe operation wherever it might be required, at the sensor and at the hub.

CEVA provides flexibility, low power and cost-effectiveness as an embedded solution to support all these needs. The CEVA NeuPro neural net processor can be used both as a central processor and at edge nodes, and the CEVA-XM6 vision processor provides the foundation for object recognition. You can learn more about how these platforms can be used in automotive applications here.

The article was published in Sensors Online.
