Computer vision and computational photography are an intrinsic part of the Internet of Things (IoT) arena, where cameras and sensors reign supreme. This blog post offers a quick overview of how computer vision technology is transforming some of the most crucial markets in 2016: mobile, automotive, security and surveillance, and drones.

Mobile

The camera is by far the most heavily marketed and differentiating smartphone feature. For most consumers, the smartphone camera is their only camera, and they expect image quality comparable to, or even better than, a point-and-shoot camera. Meanwhile, the front-facing selfie camera has become a social trend in its own right.

Several smartphones with dual cameras appeared during 2014. The HTC One M8, launched in early 2014, was one of the first to introduce a dual primary camera of this kind, pairing 4MP and 2MP sensors, along with a 5MP front-facing camera for selfies. You may ask why the primary camera needs to be a dual camera at all.

In the case of the M8, the secondary camera does not take pictures; it acts as a sort of rangefinder. Its job is to create a depth map of the scene. That depth map lets you add high-quality rendered effects such as background blur (the bokeh effect), or refocus your images after the shot is taken. It even allows 3D-like photos with an extra layer of depth that becomes visible when you tilt the phone.
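The article does not describe HTC's actual depth pipeline. The sketch below assumes a rectified stereo pair and an already computed per-pixel disparity map. It shows the standard pinhole relation Z = f·B/d, followed by a crude depth-gated blur that imitates a bokeh effect. The focal length, baseline, focus tolerance and all function names are illustrative assumptions.

```python
import numpy as np

def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Pinhole stereo relation Z = f * B / d for a rectified camera pair.

    focal_px and baseline_m are assumed calibration values (illustrative).
    Pixels with zero (or negative) disparity are treated as infinitely far away.
    """
    d = np.asarray(disparity_px, dtype=float)
    with np.errstate(divide="ignore"):
        return np.where(d > 0, focal_px * baseline_m / d, np.inf)

def box_blur(img, k=5):
    """Naive k x k mean filter with edge padding for a grayscale image."""
    pad = k // 2
    padded = np.pad(img.astype(float), pad, mode="edge")
    out = np.zeros(img.shape, dtype=float)
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + img.shape[0], dx:dx + img.shape[1]]
    return out / (k * k)

def synthetic_bokeh(img, depth_m, focus_m, tolerance_m=0.5, k=5):
    """Keep pixels near the chosen focus depth sharp and blur everything else."""
    in_focus = np.abs(depth_m - focus_m) <= tolerance_m
    return np.where(in_focus, img.astype(float), box_blur(img, k))

# Example: 1000-pixel focal length and 2 cm baseline (both hypothetical).
print(depth_from_disparity([[40.0, 4.0]], focal_px=1000.0, baseline_m=0.02))
```

With these assumed values, disparities of 40 and 4 pixels map to depths of 0.5 m and 5 m. This shows why a small phone baseline resolves nearby subjects far better than distant ones. "Refocusing" after capture amounts to re-running the gating step with a different `focus_m`.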

Since then, many other smartphones have followed the dual-camera trend, with even higher resolutions.

All of these phones use the dual camera as the primary camera. That changed when LG introduced the V10, equipped with dual 5MP front-facing cameras. This two-lens setup lets the V10 combine images with a smart algorithm to produce a wider-angle selfie shot without a selfie stick. You can take standard 80-degree selfies with one camera, or wide 120-degree shots using both cameras. Taking group selfies without a selfie stick has never been easier.

Dual front-facing cameras will take the selfie craze to a new level. There is also much speculation that the upcoming Samsung Galaxy S7 and iPhone 7 may be equipped with dual-camera hardware. However, dual cameras bring challenging tasks. The phone must combine two image streams and register them in real time, and it must correct the lens distortion of a 120-degree field of view, also in real time.
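The article does not say which registration method a dual-camera phone would use. Phase correlation is one well-known technique for estimating the translation between two frames. The minimal sketch below assumes same-size grayscale frames that differ only by a whole-pixel (circular) shift. Real two-camera registration must also handle parallax, rotation and lens distortion. The function name is hypothetical.

```python
import numpy as np

def phase_correlation_shift(ref, moved):
    """Estimate the integer (dy, dx) translation that maps `ref` onto `moved`.

    Normalizing the cross-power spectrum keeps only phase information, so the
    inverse FFT is (ideally) a single peak located at the shift.
    """
    f_ref = np.fft.fft2(ref)
    f_mov = np.fft.fft2(moved)
    cross = f_mov * np.conj(f_ref)
    cross /= np.maximum(np.abs(cross), 1e-12)  # avoid division by zero
    corr = np.fft.ifft2(cross).real
    dy, dx = np.unravel_index(np.argmax(corr), corr.shape)
    # FFT indices wrap around: peaks past the midpoint are negative shifts.
    h, w = ref.shape
    if dy > h // 2:
        dy -= h
    if dx > w // 2:
        dx -= w
    return int(dy), int(dx)

rng = np.random.default_rng(0)
frame = rng.random((64, 64))
shifted = np.roll(frame, (3, -5), axis=(0, 1))
print(phase_correlation_shift(frame, shifted))
```

Because the work is dominated by FFTs, this kind of registration maps well onto vector hardware. A production pipeline would add sub-pixel peak interpolation and windowing to reduce edge effects.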

Then there are challenges such as video stabilization, hybrid zoom, low-light performance and super-crisp resolution. Mobile consumers demand visual quality equivalent to DSLRs. That involves features like fast autofocus, full HD video, and multi-frame processing at 30 fps or even 60 fps. Delivering them calls for a dramatic increase in processing power while keeping power consumption very low.
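To put these frame rates in perspective, the arithmetic below computes the per-frame time budget and the pixel rate for full HD (1920x1080). The `frames_fused` multiplier for multi-frame processing is a hypothetical parameter added for illustration.

```python
def frame_budget_ms(fps):
    """Time available to process one frame, in milliseconds."""
    return 1000.0 / fps

def pixel_rate(width, height, fps, frames_fused=1):
    """Pixels per second the pipeline must read.

    frames_fused: hypothetical number of captured frames combined into each
    output frame by multi-frame processing (1 = single-frame processing).
    """
    return width * height * fps * frames_fused

for fps in (30, 60):
    print(f"{fps} fps: {frame_budget_ms(fps):.1f} ms/frame, "
          f"{pixel_rate(1920, 1080, fps) / 1e6:.1f} Mpixel/s")
```

This prints 33.3 ms and 62.2 Mpixel/s at 30 fps, and 16.7 ms and 124.4 Mpixel/s at 60 fps. Fusing four frames per output at 60 fps (`frames_fused=4`) would push the read rate to roughly 498 million pixels per second, all within a phone's power budget.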

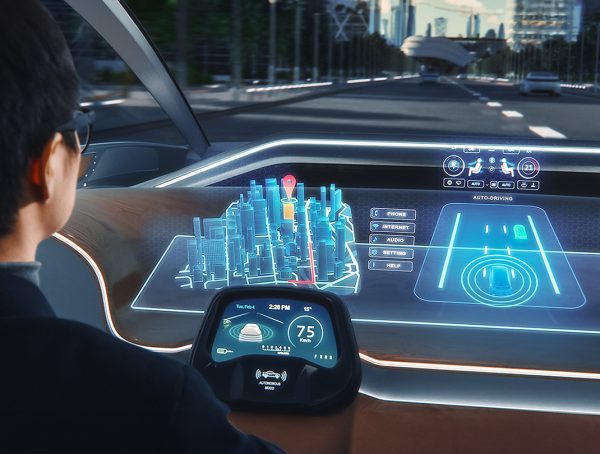

Automotive

Automotive electronics is the second-largest growth market for computer vision chips after mobile. Safety requirements are rising dramatically with the push toward advanced driver assistance systems (ADAS). Government regulations around the world are also pushing for more safety hardware in vehicles.

Cameras could be mounted outside the car to prevent collisions with pedestrians, and inside the car to monitor the driver (for example, whether he or she is tired or drowsy) or children's activities in the back seat. Car insurance companies are also pushing for a camera-centric ecosystem so that they can base their decisions on undeniable facts.

Not surprisingly, technologies like ADAS could put three to 10 always-on cameras in each car in the near future. These cameras will need to be smart enough to make decisions efficiently in real time. They simply cannot afford to send data to the cloud and wait for a response.

We also see demand for “always-on” systems inside the car that perform face recognition. Drivers would not be able to start the engine unless they pass face-recognition authentication. These always-on systems must operate at very low power.

Then there is autonomous driving, which will require many smart cameras. Full autonomy is still a long way off. Even so, the work toward it is already improving driving safety and convenience today, largely through the introduction of smart cameras.

Security and Surveillance

Security and surveillance devices are time- and mission-critical. They cannot wait for a response from the cloud, so there is a growing need to make cameras smarter and reduce reliance on bulky monitoring rooms.

Furthermore, computer vision tasks like motion detection must be done intelligently, which makes it imperative to move video analytics into the camera. Doing so also saves the cost of large control rooms and minimizes dependence on expensive cloud servers. On-camera processing is becoming cheaper, so cameras can increasingly respond in real time and substitute for expensive control-room monitoring.
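Frame differencing is the simplest form of on-camera motion detection. The sketch below is a generic illustration, not any particular product's algorithm. The thresholds are arbitrary assumptions, and real video analytics add background modeling and noise filtering.

```python
import numpy as np

def motion_detected(prev, curr, pixel_thresh=25, area_frac=0.01):
    """Simple frame-differencing motion detector for 8-bit grayscale frames.

    Flags motion when more than `area_frac` of the pixels changed by more than
    `pixel_thresh` grey levels. Both thresholds are illustrative assumptions.
    """
    # Widen to int16 so the subtraction cannot wrap around in uint8.
    diff = np.abs(curr.astype(np.int16) - prev.astype(np.int16))
    changed = diff > pixel_thresh
    return bool(changed.mean() > area_frac)

prev = np.zeros((100, 100), dtype=np.uint8)
curr = prev.copy()
curr[10:30, 10:30] = 200  # a 20x20 object appears (4% of the frame)
print(motion_detected(prev, curr))
```

A check this cheap can run on every frame inside the camera. Only frames that trip it need to be encoded, stored or sent upstream for heavier analysis.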

Smart Camera Reduces Reliance on Bulky Monitoring Rooms and Expensive Cloud Servers

Security cameras are also moving toward 4K resolution, so it will not be practical to store all the data they produce day and night. Storage and analysis in the cloud are expensive propositions. A smart camera, by contrast, is triggered only when it detects irregular behavior, saving the cost of storing huge amounts of data.
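To make the storage argument concrete, the arithmetic below estimates per-camera storage per day. The 16 Mbit/s bitrate for an encoded 4K stream and the 5% event share are assumptions chosen for illustration, not measured figures.

```python
def storage_gb_per_day(bitrate_mbps, active_fraction=1.0):
    """Storage per camera per day, in gigabytes (10^9 bytes).

    bitrate_mbps: encoded stream bitrate in megabits per second (assumed).
    active_fraction: share of the day the camera actually records.
    """
    seconds_per_day = 24 * 60 * 60
    bytes_per_second = bitrate_mbps * 1e6 / 8
    return bytes_per_second * seconds_per_day * active_fraction / 1e9

print(f"continuous:      {storage_gb_per_day(16):.1f} GB/day")
print(f"event-triggered: {storage_gb_per_day(16, 0.05):.2f} GB/day")
```

Under these assumptions, continuous recording needs about 172.8 GB per camera per day, while recording only during events needs about 8.64 GB. The saving scales directly with how rarely the scene contains irregular activity.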

Drones

Drones are becoming smarter by the day. However, vibration from the drone's motors shakes the camera, so the video must be stabilized. Drones use a gimbal to manage this vibration, and this accessory costs more than $200.

A robust computer vision solution with additional processing can stabilize video in real time and eliminate that extra cost of $200 or more straightaway. Moreover, computer vision enables drones to recognize objects through complex software algorithms and to achieve the situational awareness needed to avoid collisions.
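Software stabilization typically works in four steps:

1. Estimate the motion between consecutive frames, for example by image registration.
2. Accumulate that motion into a camera path.
3. Smooth the path.
4. Shift each frame by the difference between the raw and smoothed paths.

The minimal sketch below covers only steps 2 to 4, for pure translation, using a moving average. The window size and function name are illustrative assumptions, not a description of any vendor's implementation.

```python
import numpy as np

def stabilizing_offsets(frame_shifts, window=5):
    """Per-frame corrective offsets for translational video stabilization.

    frame_shifts: (N, 2) array of estimated (dy, dx) motion between consecutive frames.
    Returns (N, 2) offsets such that raw_path + offsets == smoothed_path,
    where the smoothed path is a moving average that removes high-frequency shake.
    """
    assert window % 2 == 1, "use an odd window so the output length matches"
    shifts = np.asarray(frame_shifts, dtype=float)
    trajectory = np.cumsum(shifts, axis=0)  # raw camera path
    pad = window // 2
    padded = np.pad(trajectory, ((pad, pad), (0, 0)), mode="edge")
    kernel = np.ones(window) / window
    smoothed = np.column_stack([
        np.convolve(padded[:, i], kernel, mode="valid") for i in range(2)
    ])
    return smoothed - trajectory

# A single 4-pixel horizontal jolt that the motor vibration immediately undoes.
jolt = [[0, 0], [0, 0], [0, 4], [0, -4], [0, 0], [0, 0], [0, 0]]
print(stabilizing_offsets(jolt, window=3))
```

In the example, the stabilized path (raw path plus offsets) jumps by at most 4/3 pixel instead of 4 pixels. Larger windows smooth more aggressively, at the cost of lagging behind intentional camera moves.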

Then there are “Follow me” and “Point of interest” features, offered on drones such as the DJI Phantom 3, which also boasts 4K high resolution and image enhancement. According to a recent BCC Research study, the global drone market was expected to grow from $639.9 million in 2014 to $725.5 million in 2015, and to reach $1.2 billion by 2020.
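The growth rates implied by these BCC Research figures can be worked out with the standard compound annual growth rate formula. The sketch assumes “by 2020” means five years after 2015, and it treats $1.2 billion as $1,200 million.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate implied by two values `years` apart."""
    return (end_value / start_value) ** (1.0 / years) - 1.0

print(f"2014 to 2015: {cagr(639.9, 725.5, 1):.1%}")   # figures in $ million
print(f"2015 to 2020: {cagr(725.5, 1200.0, 5):.1%}")
```

The figures imply about 13.4% growth from 2014 to 2015, and roughly 10.6% per year from 2015 to 2020.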

CEVA-XM4: Profile of a Vision Processor

The growth markets outlined above clearly show the need for a dedicated vision DSP that carries out computer vision tasks faster and more power-efficiently. The CEVA-XM4 intelligent vision processor was developed from the ground up for computer vision applications such as video stabilization, digital zoom, super resolution and image refocus.

The CEVA-XM4 vision processor takes advantage of pixel overlap in image processing by reusing the same data to produce multiple outputs. This increases processing capability, reduces power consumption, saves external memory bandwidth and frees system buses for other tasks.
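The post does not detail how the CEVA-XM4 implements data reuse. As a generic illustration of why overlapping windows save memory traffic, the sketch below computes a 3x3 mean filter two ways and counts pixel loads as a rough proxy for memory accesses. The function names are hypothetical. A real vision DSP would use vector registers and line buffers rather than Python lists.

```python
import numpy as np

def filter3x3_naive(img):
    """3x3 mean filter (valid region) that fetches all 9 neighbours for every output."""
    h, w = img.shape
    out = np.zeros((h - 2, w - 2))
    loads = 0
    for y in range(h - 2):
        for x in range(w - 2):
            acc = 0.0
            for dy in range(3):
                for dx in range(3):
                    acc += img[y + dy, x + dx]
                    loads += 1
            out[y, x] = acc / 9
    return out, loads

def filter3x3_reuse(img):
    """Same filter, but keeps the last three column sums and fetches one new column per step."""
    h, w = img.shape
    out = np.zeros((h - 2, w - 2))
    loads = 0
    for y in range(h - 2):
        cols = []  # sliding window of the three most recent 3-pixel column sums
        for x in range(w):
            cols.append(img[y, x] + img[y + 1, x] + img[y + 2, x])
            loads += 3
            if len(cols) > 3:
                cols.pop(0)  # oldest column leaves the window
            if len(cols) == 3:
                out[y, x - 2] = sum(cols) / 9
    return out, loads

image = np.arange(64, dtype=float).reshape(8, 8)
(a, naive_loads), (b, reuse_loads) = filter3x3_naive(image), filter3x3_reuse(image)
print(np.allclose(a, b), naive_loads, reuse_loads)
```

On this 8x8 image, the naive version performs 324 loads and the reuse version 144, with identical results. For wide images the ratio approaches 3:1, because each output needs one new 3-pixel column instead of nine pixels.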

CEVA-XM4 enables multi-app processing for gesture and face detection, emotion detection and eye tracking

The CEVA-XM4 is CEVA’s fourth-generation imaging and vision processor IP, designed to bring embedded systems closer to human vision and visual perception. It offers up to 8x performance gain and 2.5x power savings compared to CEVA’s previous-generation vision core.

